Examples

8-bit (1.4.3)

A minifloat in 1 byte (8 bit) with 1 sign bit, 4 exponent bits and 3 significand bits (1.4.3) is demonstrated here. The exponent bias is defined as 7 to center the values around 1 to match other IEEE 754 floats [11] [12] so (for most values) the actual multiplier for exponent x is 2x−7. All IEEE 754 principles should be valid. [13] This form is quite common for instruction.[ citation needed ]

| Sign | Exponent | Significand | |||||

|---|---|---|---|---|---|---|---|

| 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

Zero is represented as zero exponent with a zero mantissa. The zero exponent means zero is a subnormal number with a leading "0." prefix, and with the zero mantissa all bits after the decimal point are zero, meaning this value is interpreted as . Floating point numbers use a signed zero, so is also available and is equal to positive .

0 0000 000 = 0 1 0000 000 = −0

For the lowest exponent the significand is extended with "0." and the exponent value is treated as 1 higher like the least normalized number:

0 0000 001 = 0.0012 × 21 - 7 = 0.125 × 2−6 = 0.001953125 (least subnormal number) ... 0 0000 111 = 0.1112 × 21 - 7 = 0.875 × 2−6 = 0.013671875 (greatest subnormal number)

All other exponents the significand is extended with "1.":

0 0001 000 = 1.0002 × 21 - 7 = 1 × 2−6 = 0.015625 (least normalized number) 0 0001 001 = 1.0012 × 21 - 7 = 1.125 × 2−6 = 0.017578125 ... 0 0111 000 = 1.0002 × 27 - 7 = 1 × 20 = 1 0 0111 001 = 1.0012 × 27 - 7 = 1.125 × 20 = 1.125 (least value above 1) ... 0 1110 000 = 1.0002 × 214 - 7 = 1.000 × 27 = 128 0 1110 001 = 1.0012 × 214 - 7 = 1.125 × 27 = 144 ... 0 1110 110 = 1.1102 × 214 - 7 = 1.750 × 27 = 224 0 1110 111 = 1.1112 × 214 - 7 = 1.875 × 27 = 240 (greatest normalized number)

Infinity values have the highest exponent, with the mantissa set to zero. The sign bit can be either positive or negative.

0 1111 000 = +infinity 1 1111 000 = −infinity

NaN values have the highest exponent, with the mantissa non-zero.

s 1111 mmm = NaN (if mmm ≠ 000)

This is a chart of all possible values for this example 8-bit float:

| … 000 | … 001 | … 010 | … 011 | … 100 | … 101 | … 110 | … 111 | |

|---|---|---|---|---|---|---|---|---|

| 0 0000 … | 0 | 0.001953125 | 0.00390625 | 0.005859375 | 0.0078125 | 0.009765625 | 0.01171875 | 0.013671875 |

| 0 0001 … | 0.015625 | 0.017578125 | 0.01953125 | 0.021484375 | 0.0234375 | 0.025390625 | 0.02734375 | 0.029296875 |

| 0 0010 … | 0.03125 | 0.03515625 | 0.0390625 | 0.04296875 | 0.046875 | 0.05078125 | 0.0546875 | 0.05859375 |

| 0 0011 … | 0.0625 | 0.0703125 | 0.078125 | 0.0859375 | 0.09375 | 0.1015625 | 0.109375 | 0.1171875 |

| 0 0100 … | 0.125 | 0.140625 | 0.15625 | 0.171875 | 0.1875 | 0.203125 | 0.21875 | 0.234375 |

| 0 0101 … | 0.25 | 0.28125 | 0.3125 | 0.34375 | 0.375 | 0.40625 | 0.4375 | 0.46875 |

| 0 0110 … | 0.5 | 0.5625 | 0.625 | 0.6875 | 0.75 | 0.8125 | 0.875 | 0.9375 |

| 0 0111 … | 1 | 1.125 | 1.25 | 1.375 | 1.5 | 1.625 | 1.75 | 1.875 |

| 0 1000 … | 2 | 2.25 | 2.5 | 2.75 | 3 | 3.25 | 3.5 | 3.75 |

| 0 1001 … | 4 | 4.5 | 5 | 5.5 | 6 | 6.5 | 7 | 7.5 |

| 0 1010 … | 8 | 9 | 10 | 11 | 12 | 13 | 14 | 15 |

| 0 1011 … | 16 | 18 | 20 | 22 | 24 | 26 | 28 | 30 |

| 0 1100 … | 32 | 36 | 40 | 44 | 48 | 52 | 56 | 60 |

| 0 1101 … | 64 | 72 | 80 | 88 | 96 | 104 | 112 | 120 |

| 0 1110 … | 128 | 144 | 160 | 176 | 192 | 208 | 224 | 240 |

| 0 1111 … | Inf | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 1 0000 … | −0 | −0.001953125 | −0.00390625 | −0.005859375 | −0.0078125 | −0.009765625 | −0.01171875 | −0.013671875 |

| 1 0001 … | −0.015625 | −0.017578125 | −0.01953125 | −0.021484375 | −0.0234375 | −0.025390625 | −0.02734375 | −0.029296875 |

| 1 0010 … | −0.03125 | −0.03515625 | −0.0390625 | −0.04296875 | −0.046875 | −0.05078125 | −0.0546875 | −0.05859375 |

| 1 0011 … | −0.0625 | −0.0703125 | −0.078125 | −0.0859375 | −0.09375 | −0.1015625 | −0.109375 | −0.1171875 |

| 1 0100 … | −0.125 | −0.140625 | −0.15625 | −0.171875 | −0.1875 | −0.203125 | −0.21875 | −0.234375 |

| 1 0101 … | −0.25 | −0.28125 | −0.3125 | −0.34375 | −0.375 | −0.40625 | −0.4375 | −0.46875 |

| 1 0110 … | −0.5 | −0.5625 | −0.625 | −0.6875 | −0.75 | −0.8125 | −0.875 | −0.9375 |

| 1 0111 … | −1 | −1.125 | −1.25 | −1.375 | −1.5 | −1.625 | −1.75 | −1.875 |

| 1 1000 … | −2 | −2.25 | −2.5 | −2.75 | −3 | −3.25 | −3.5 | −3.75 |

| 1 1001 … | −4 | −4.5 | −5 | −5.5 | −6 | −6.5 | −7 | −7.5 |

| 1 1010 … | −8 | −9 | −10 | −11 | −12 | −13 | −14 | −15 |

| 1 1011 … | −16 | −18 | −20 | −22 | −24 | −26 | −28 | −30 |

| 1 1100 … | −32 | −36 | −40 | −44 | −48 | −52 | −56 | −60 |

| 1 1101 … | −64 | −72 | −80 | −88 | −96 | −104 | −112 | −120 |

| 1 1110 … | −128 | −144 | −160 | −176 | −192 | −208 | −224 | −240 |

| 1 1111 … | −Inf | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

There are only 242 different non-NaN values (if +0 and −0 are regarded as different), because 14 of the bit patterns represent NaNs.

To convert to/from 8-bit floats in programming languages, libraries or functions are usually required, since this format is not standardized. For example, here is an example implementation in C++ (public domain).

8-bit (1.4.3) with B = −2

At these small sizes other bias values may be interesting, for instance a bias of −2 will make the numbers 0–16 have the same bit representation as the integers 0–16, with the loss that no non-integer values can be represented.

0 0000 000 = 0.0002 × 21 - (-2) = 0.0 × 23 = 0 (subnormal number) 0 0000 001 = 0.0012 × 21 - (-2) = 0.125 × 23 = 1 (subnormal number) 0 0000 111 = 0.1112 × 21 - (-2) = 0.875 × 23 = 7 (subnormal number) 0 0001 000 = 1.0002 × 21 - (-2) = 1.000 × 23 = 8 (normalized number) 0 0001 111 = 1.1112 × 21 - (-2) = 1.875 × 23 = 15 (normalized number) 0 0010 000 = 1.0002 × 22 - (-2) = 1.000 × 24 = 16 (normalized number)

8-bit (1.3.4)

Any bit allocation is possible. A format could choose to give more of the bits to the exponent if they need more dynamic range with less precision, or give more of the bits to the significand if they need more precision with less dynamic range. At the extreme, it is possible to allocate all bits to the exponent (1.7.0), or all but one of the bits to the significand (1.1.6), leaving the exponent with only one bit. The exponent must be given at least one bit, or else it no longer makes sense as a float, it just becomes a signed number.

Here is a chart of all possible values for (1.3.4). M≥ 2E − 1 ensures that the precision remains at least 0.5 throughout the entire range. [14]

| … 0000 | … 0001 | … 0010 | … 0011 | … 0100 | … 0101 | … 0110 | … 0111 | … 1000 | … 1001 | … 1010 | … 1011 | … 1100 | … 1101 | … 1110 | … 1111 | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 000 … | 0 | 0.015625 | 0.03125 | 0.046875 | 0.0625 | 0.078125 | 0.09375 | 0.109375 | 0.125 | 0.140625 | 0.15625 | 0.171875 | 0.1875 | 0.203125 | 0.21875 | 0.234375 |

| 0 001 … | 0.25 | 0.265625 | 0.28125 | 0.296875 | 0.3125 | 0.328125 | 0.34375 | 0.359375 | 0.375 | 0.390625 | 0.40625 | 0.421875 | 0.4375 | 0.453125 | 0.46875 | 0.484375 |

| 0 010 … | 0.5 | 0.53125 | 0.5625 | 0.59375 | 0.625 | 0.65625 | 0.6875 | 0.71875 | 0.75 | 0.78125 | 0.8125 | 0.84375 | 0.875 | 0.90625 | 0.9375 | 0.96875 |

| 0 011 … | 1 | 1.0625 | 1.125 | 1.1875 | 1.25 | 1.3125 | 1.375 | 1.4375 | 1.5 | 1.5625 | 1.625 | 1.6875 | 1.75 | 1.8125 | 1.875 | 1.9375 |

| 0 100 … | 2 | 2.125 | 2.25 | 2.375 | 2.5 | 2.625 | 2.75 | 2.875 | 3 | 3.125 | 3.25 | 3.375 | 3.5 | 3.625 | 3.75 | 3.875 |

| 0 101 … | 4 | 4.25 | 4.5 | 4.75 | 5 | 5.25 | 5.5 | 5.75 | 6 | 6.25 | 6.5 | 6.75 | 7 | 7.25 | 7.5 | 7.75 |

| 0 110 … | 8 | 8.5 | 9 | 9.5 | 10 | 10.5 | 11 | 11.5 | 12 | 12.5 | 13 | 13.5 | 14 | 14.5 | 15 | 15.5 |

| 0 111 … | Inf | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 1 000 … | −0 | −0.015625 | −0.03125 | −0.046875 | −0.0625 | −0.078125 | −0.09375 | −0.109375 | −0.125 | −0.140625 | −0.15625 | −0.171875 | −0.1875 | −0.203125 | −0.21875 | −0.234375 |

| 1 001 … | −0.25 | −0.265625 | −0.28125 | −0.296875 | −0.3125 | −0.328125 | −0.34375 | −0.359375 | −0.375 | −0.390625 | −0.40625 | −0.421875 | −0.4375 | −0.453125 | −0.46875 | −0.484375 |

| 1 010 … | −0.5 | −0.53125 | −0.5625 | −0.59375 | −0.625 | −0.65625 | −0.6875 | −0.71875 | −0.75 | −0.78125 | −0.8125 | −0.84375 | −0.875 | −0.90625 | −0.9375 | −0.96875 |

| 1 011 … | −1 | −1.0625 | −1.125 | −1.1875 | −1.25 | −1.3125 | −1.375 | −1.4375 | −1.5 | −1.5625 | −1.625 | −1.6875 | −1.75 | −1.8125 | −1.875 | −1.9375 |

| 1 100 … | −2 | −2.125 | −2.25 | −2.375 | −2.5 | −2.625 | −2.75 | −2.875 | −3 | −3.125 | −3.25 | −3.375 | −3.5 | −3.625 | −3.75 | −3.875 |

| 1 101 … | −4 | −4.25 | −4.5 | −4.75 | −5 | −5.25 | −5.5 | −5.75 | −6 | −6.25 | −6.5 | −6.75 | −7 | −7.25 | −7.5 | −7.75 |

| 1 110 … | −8 | −8.5 | −9 | −9.5 | −10 | −10.5 | −11 | −11.5 | −12 | −12.5 | −13 | −13.5 | −14 | −14.5 | −15 | −15.5 |

| 1 111 … | −Inf | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

Tables like the above can be generated for any combination of SEMB (sign, exponent, mantissa/significand, and bias) values using a script in Python or in GDScript.

6-bit (1.3.2)

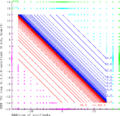

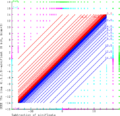

With only 64 values, it is possible to plot all the values in a diagram, which can be instructive.

These graphics demonstrates math of two 6-bit (1.3.2)-minifloats, following the rules of IEEE 754 exactly. Green X's are NaN results, Cyan X's are +Infinity results, Magenta X's are −Infinity results. The range of the finite results is filled with curves joining equal values, blue for positive and red for negative.

- Addition

- Subtraction

- Multiplication

- Division

4 bit (1.2.1)

The smallest possible float size that follows all IEEE principles, including normalized numbers, subnormal numbers, signed zero, signed infinity, and multiple NaN values, is a 4-bit float with 1-bit sign, 2-bit exponent, and 1-bit mantissa. [15]

| .000 | .001 | .010 | .011 | .100 | .101 | .110 | .111 | |

|---|---|---|---|---|---|---|---|---|

| 0... | 0 | 0.5 | 1 | 1.5 | 2 | 3 | Inf | NaN |

| 1... | −0 | −0.5 | −1 | −1.5 | −2 | −3 | −Inf | NaN |

This example 4-bit float is very similar to the FP4-E2M1 format used by Nvidia, and the IEEE P3109 binary4p2s* formats, as described above, except for a different allocation of the special Infinity and NaN values. This example uses a traditional allocation following the same principles as other float sizes. Floats intended for application-specific use cases instead of general-purpose interoperability may prefer to use those bit patterns for finite numbers, depending on the needs of the application.

3-bit (1.1.1)

If normalized numbers are not required, the size can be reduced to 3-bit by reducing the exponent down to 1.

| .00 | .01 | .10 | .11 | |

|---|---|---|---|---|

| 0... | 0 | 1 | Inf | NaN |

| 1... | −0 | −1 | −Inf | NaN |

2-bit (0.1.1) and 3-bit (0.2.1)

In situations where the sign bit can be excluded, each of the above examples can be reduced by 1 bit further, keeping only the first row of the above tables. A 2-bit float with 1-bit exponent and 1-bit mantissa would only have 0, 1, Inf, NaN values.

1-bit (0.1.0)

Removing the mantissa would allow only two values: 0 and Inf. Removing the exponent does not work, the above formulae produce 0 and sqrt(2)/2. The exponent must be at least 1 bit or else it no longer makes sense as a float (it would just be a signed number).