Character encoding is the process of assigning numbers to graphical characters, especially the written characters of human language, allowing them to be stored, transmitted, and transformed using digital computers. The numerical values that make up a character encoding are known as "code points" and collectively comprise a "code space", a "code page", or a "character map".

While Hypertext Markup Language (HTML) has been in use since 1991, HTML 4.0 from December 1997 was the first standardized version where international characters were given reasonably complete treatment. When an HTML document includes special characters outside the range of seven-bit ASCII, two goals are worth considering: the information's integrity, and universal browser display.

UTF-8 is a variable-length character encoding standard used for electronic communication. Defined by the Unicode Standard, the name is derived from Unicode Transformation Format – 8-bit.

UTF-16 (16-bit Unicode Transformation Format) is a character encoding capable of encoding all 1,112,064 valid code points of Unicode (in fact this number of code points is dictated by the design of UTF-16). The encoding is variable-length, as code points are encoded with one or two 16-bit code units. UTF-16 arose from an earlier obsolete fixed-width 16-bit encoding now known as UCS-2 (for 2-byte Universal Character Set), once it became clear that more than 216 (65,536) code points were needed, including most emoji and important CJK characters such as for personal and place names.

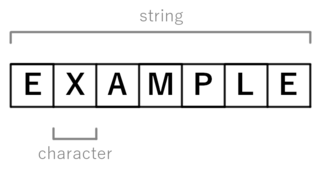

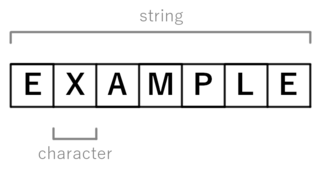

In computer and machine-based telecommunications terminology, a character is a unit of information that roughly corresponds to a grapheme, grapheme-like unit, or symbol, such as in an alphabet or syllabary in the written form of a natural language.

The byte order mark (BOM) is a particular usage of the special Unicode character, U+FEFFZERO WIDTH NO-BREAK SPACE, whose appearance as a magic number at the start of a text stream can signal several things to a program reading the text:

Big-5 or Big5 is a Chinese character encoding method used in Taiwan, Hong Kong, and Macau for traditional Chinese characters.

UTF-32 (32-bit Unicode Transformation Format) is a fixed-length encoding used to encode Unicode code points that uses exactly 32 bits (four bytes) per code point (but a number of leading bits must be zero as there are far fewer than 232 Unicode code points, needing actually only 21 bits). UTF-32 is a fixed-length encoding, in contrast to all other Unicode transformation formats, which are variable-length encodings. Each 32-bit value in UTF-32 represents one Unicode code point and is exactly equal to that code point's numerical value.

A double-byte character set (DBCS) is a character encoding in which either all characters are encoded in two bytes, or merely every graphic character not representable by an accompanying single-byte character set (SBCS) is encoded in two bytes. A DBCS supports national languages that contain many unique characters or symbols. Examples of such languages include Japanese and Chinese. Korean Hangul does not contain as many characters, but KS X 1001 supports both Hangul and Hanja, and uses two bytes per character.

In computing, JIS encoding refers to several Japanese Industrial Standards for encoding the Japanese language. Strictly speaking, the term means either:

ISO/IEC 2022Information technology—Character code structure and extension techniques, is an ISO/IEC standard in the field of character encoding. It is equivalent to the ECMA standard ECMA-35, the ANSI standard ANSI X3.41 and the Japanese Industrial Standard JIS X 0202. Originating in 1971, it was most recently revised in 1994.

Extended Unix Code (EUC) is a multibyte character encoding system used primarily for Japanese, Korean, and simplified Chinese (characters).

A numeric character reference (NCR) is a common markup construct used in SGML and SGML-derived markup languages such as HTML and XML. It consists of a short sequence of characters that, in turn, represents a single character. Since WebSgml, XML and HTML 4, the code points of the Universal Character Set (UCS) of Unicode are used. NCRs are typically used in order to represent characters that are not directly encodable in a particular document. When the document is interpreted by a markup-aware reader, each NCR is treated as if it were the character it represents.

A wide character is a computer character datatype that generally has a size greater than the traditional 8-bit character. The increased datatype size allows for the use of larger coded character sets.

UTF-EBCDIC is a character encoding capable of encoding all 1,112,064 valid character code points in Unicode using one to five one-byte (8-bit) code units. It is meant to be EBCDIC-friendly, so that legacy EBCDIC applications on mainframes may process the characters without much difficulty. Its advantages for existing EBCDIC-based systems are similar to UTF-8's advantages for existing ASCII-based systems. Details on UTF-EBCDIC are defined in Unicode Technical Report #16.

This article compares Unicode encodings. Two situations are considered: 8-bit-clean environments, and environments that forbid use of byte values that have the high bit set. Originally such prohibitions were to allow for links that used only seven data bits, but they remain in some standards and so some standard-conforming software must generate messages that comply with the restrictions. Standard Compression Scheme for Unicode and Binary Ordered Compression for Unicode are excluded from the comparison tables because it is difficult to simply quantify their size.

UTF-1 is a method of transforming ISO/IEC 10646/Unicode into a stream of bytes. Its design does not provide self-synchronization, which makes searching for substrings and error recovery difficult. It reuses the ASCII printing characters for multi-byte encodings, making it unsuited for some uses. UTF-1 is also slow to encode or decode due to its use of division and multiplication by a number which is not a power of 2. Due to these issues, it did not gain acceptance and was quickly replaced by UTF-8.

The MARC-8 charset is a MARC standard used in MARC-21 library records. The MARC formats are standards for the representation and communication of bibliographic and related information in machine-readable form, and they are frequently used in library database systems. The character encoding now known as MARC-8 was introduced in 1968 as part of the MARC format. Originally based on the Latin alphabet, from 1979 to 1983 the JACKPHY initiative expanded the repertoire to include Japanese, Arabic, Chinese, and Hebrew characters, with the later addition of Cyrillic and Greek scripts. If a character is not representable in MARC-8 of a MARC-21 record, then UTF-8 must be used instead. UTF-8 has support for many more characters than MARC-8, which is rarely used outside library data.

IBM code page 949 (IBM-949) is a character encoding which has been used by IBM to represent Korean language text on computers. It is a variable-width encoding which represents the characters from the Wansung code defined by the South Korean standard KS X 1001 in a format compatible with EUC-KR, but adds IBM extensions for additional hanja, additional precomposed Hangul syllables, and user-defined characters.