China

The roots of predictive policing can be traced to the policy approach of social governance, which Xi Jinping, leader of the Chinese Communist Party, announced at a security conference in 2016 as the Chinese regime's agenda to promote a harmonious and prosperous country through extensive use of information systems. [11] A common instance of social governance is the development of the social credit system, in which big data is used to digitize identities and quantify trustworthiness. There is no comparably comprehensive and institutionalized system of citizen assessment in the West. [12]

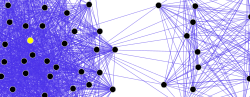

The growing collection and assessment of aggregate public and private information by China's police force to analyze past crime and forecast future criminal activity is part of the government's mission to promote social stability by converting intelligence-led policing (i.e. effectively using information) into the informatization (i.e. using information technologies) of policing. [11] The increased use of big data through the police geographical information system (PGIS) falls within China's promise to better coordinate information resources across departments and regions, transforming the analysis of past crime patterns and trends into automated prevention and suppression of crime. [13] [14] PGIS was first introduced in the 1970s and was originally used for internal government management and by research institutions for city surveying and planning. Since the mid-1990s, PGIS has been introduced into the Chinese public security industry to empower law enforcement by promoting police collaboration and resource sharing. [13] [15] Current applications of PGIS remain limited to public map services, spatial queries, and hot spot mapping. Its application in crime trajectory analysis and prediction is still in the exploratory stage; however, the promotion of informatization of policing has encouraged cloud-based upgrades to PGIS design, the fusion of multi-source spatiotemporal data, and developments in police spatiotemporal big data analysis and visualization. [16]
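As a rough illustration of what hot spot mapping involves, the sketch below bins incident coordinates into square grid cells and reports the cells with the most incidents. It is a generic grid-count approach and not a description of how PGIS is implemented; the function name `hot_spots`, the `cell_size` parameter, and the sample coordinates are all hypothetical.

```python
from collections import Counter
from typing import Iterable, List, Tuple

Point = Tuple[float, float]
Cell = Tuple[int, int]


def hot_spots(points: Iterable[Point], cell_size: float, top_k: int = 3) -> List[Tuple[Cell, int]]:
    """Count incidents per square grid cell and return the top_k busiest cells.

    Hypothetical helper: each (x, y) point is assigned to the cell whose
    index is the floor of the coordinate divided by cell_size.
    """
    if cell_size <= 0:
        raise ValueError("cell_size must be positive")
    counts: Counter = Counter()
    for x, y in points:
        cell = (int(x // cell_size), int(y // cell_size))
        counts[cell] += 1
    # most_common sorts cells by descending incident count
    return counts.most_common(top_k)


# Made-up coordinates in arbitrary map units, for illustration only
incidents = [(0.1, 0.1), (0.2, 0.3), (1.5, 0.2), (1.6, 0.4), (1.7, 0.1), (3.2, 3.3)]
print(hot_spots(incidents, cell_size=1.0, top_k=2))
```

Real systems typically use smoothed estimates such as kernel density rather than raw cell counts, but the grid count conveys the basic idea of locating areas where past incidents cluster.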

Although there is no nationwide police prediction program in China, local projects were undertaken between 2015 and 2018 in regions such as Zhejiang, Guangdong, Suzhou, and Xinjiang that are either advertised as predictive policing systems or serve as building blocks towards one. [11] [17]

Zhejiang and Guangdong established systems for the prediction and prevention of telecommunication fraud through the real-time collection and surveillance of suspicious online or telecommunication activities, in collaboration with private companies such as the Alibaba Group to identify potential suspects. [18] The predictive policing and crime prevention operation involves forewarning specific victims: the Zhongshan police force made 9,120 warning calls in 2018 and directly intercepted over 13,000 telephone calls and over 30,000 text messages in 2017. [11]

Substance-related crime is also investigated in Guangdong. In 2017, Zhongshan became the first city whose police force used wastewater analysis and data models that included water and electricity usage to locate hotspots for drug crime. This method led to the arrest of 341 suspects in 45 different criminal investigations by 2019. [19]

The Suzhou Police Bureau has used predictive policing since 2013, and several cities in China adopted it between 2015 and 2018. [20] China has also used predictive policing to identify and target people to be sent to Xinjiang internment camps. [21] [22]

The Integrated Joint Operations Platform (IJOP) predictive policing system is operated by the Central Political and Legal Affairs Commission. [23]

United States

In the United States, predictive policing has been implemented by police departments in several states, including California, Washington, South Carolina, Alabama, Arizona, Tennessee, New York, and Illinois. [25] [26]

In New York, the NYPD has begun implementing a crime tracking program called Patternizr. The goal of Patternizr is to help police officers identify commonalities in crimes committed by the same offender or group of offenders. With Patternizr, officers can save time and work more efficiently, as the program generates a possible "pattern" of different crimes. The officer then manually searches through the possible patterns to see whether the generated crimes are related to the current suspect. If the crimes match, the officer launches a deeper investigation into the pattern crimes. [27]
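The workflow described above, in which software proposes candidate crimes and an officer reviews them, can be illustrated with a toy similarity ranking. This is a minimal sketch and not the NYPD's actual algorithm: it assumes each crime is described by a set of categorical attributes and scores candidates by Jaccard similarity to a seed crime. The names `jaccard` and `rank_candidates`, the `min_score` threshold, and the sample data are all hypothetical.

```python
from typing import Dict, List, Set, Tuple


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Share of attributes two crimes have in common (0.0 to 1.0)."""
    union = a | b
    if not union:
        return 0.0
    return len(a & b) / len(union)


def rank_candidates(
    seed: Set[str],
    candidates: Dict[str, Set[str]],
    min_score: float = 0.3,
) -> List[Tuple[str, float]]:
    """Return candidate crime IDs scoring at least min_score, most similar first.

    The output is only a shortlist; a human reviewer still decides
    whether any candidate actually belongs to the same pattern.
    """
    scored = [(cid, jaccard(seed, feats)) for cid, feats in candidates.items()]
    kept = [(cid, score) for cid, score in scored if score >= min_score]
    return sorted(kept, key=lambda pair: pair[1], reverse=True)


# Made-up attribute sets, for illustration only
seed_crime = {"robbery", "night", "knife", "subway"}
past_crimes = {
    "A": {"robbery", "night", "knife", "street"},
    "B": {"burglary", "day"},
    "C": {"robbery", "night", "knife", "subway"},
}
print(rank_candidates(seed_crime, past_crimes))
```

The threshold only trims the list that the officer has to read; it does not replace the manual review step described in the text.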