The context algorithm

In the article A Universal Data Compression System, [1] Rissanen introduced a consistent algorithm to estimate the probabilistic context tree that generates the data. This algorithm's function can be summarized in two steps:

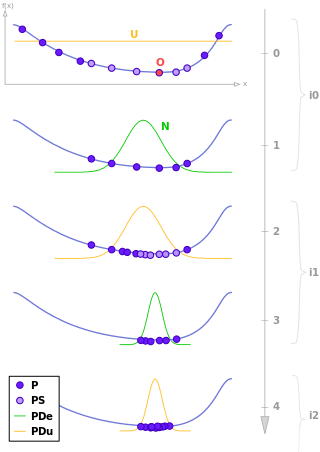

- Given the sample produced by a chain with memory of variable length, start with the maximal tree whose branches are all the candidate contexts for the sample;

- The branches of this tree are then pruned until the smallest tree that is well adapted to the data is obtained. Whether or not to shorten a context is decided by a given gain function, such as the log-likelihood ratio.

Let $X_0, \ldots, X_{n-1}$ be a sample of a finite probabilistic context tree $(\tau, p)$. For any sequence $x_{-j}^{-1}$ with $j \le n$, denote by $N_n(x_{-j}^{-1})$ the number of occurrences of the sequence in the sample, i.e.,

$$N_n(x_{-j}^{-1}) = \sum_{t=0}^{n-j} \mathbf{1}\left\{X_t^{t+j-1} = x_{-j}^{-1}\right\}$$
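As a minimal sketch of this occurrence count, the following Python function slides a window of the word's length over the sample and counts exact matches, overlapping ones included. The name `count_occurrences` and the representation of the sample as a plain sequence of symbols are illustrative assumptions, not something taken from Rissanen's paper.

```python
def count_occurrences(sample, word):
    """Count the positions t at which `word` appears in `sample`.

    Overlapping occurrences are counted separately, matching a sum of
    indicator functions over all window start positions.
    """
    sample, word = tuple(sample), tuple(word)
    n, j = len(sample), len(word)
    # Window starts run over t = 0, ..., n - j; the range is empty when j > n.
    return sum(1 for t in range(n - j + 1) if sample[t:t + j] == word)
```

For instance, `count_occurrences("abracadabra", "abra")` gives 2, and `count_occurrences("aaaa", "aa")` gives 3 because overlapping windows are counted.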

Rissanen first built a maximal context candidate, given by $X_{n-K(n)}^{n-1}$, where $K(n) = C \log n$ and $C$ is an arbitrary positive constant. The intuitive reason for the choice of $C \log n$ is that the probabilities of sequences longer than $\log n$ cannot be estimated from a sample of size $n$.

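From there, Rissanen shortens the maximal candidate by successively cutting branches according to a sequence of tests based on the statistical likelihood ratio. More formally, writing $A$ for the alphabet, if $\sum_{b \in A} N_n(x_{-k}^{-1} b) > 0$, define the estimator of the transition probability $p$ by

$$\hat p_n(a \mid x_{-k}^{-1}) = \frac{N_n(x_{-k}^{-1} a)}{\sum_{b \in A} N_n(x_{-k}^{-1} b)}$$

where $x_{-k}^{-1} a = (x_{-k}, \ldots, x_{-1}, a)$. If $\sum_{b \in A} N_n(x_{-k}^{-1} b) = 0$, define $\hat p_n(a \mid x_{-k}^{-1}) = 1/|A|$.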
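The estimator of the transition probability can be sketched in Python as an empirical frequency: count how often each symbol follows the given context, then normalize, falling back to the uniform distribution when the context is never followed by any symbol. The function name `transition_estimate` and its signature are hypothetical, and the sketch assumes every symbol of the sample belongs to `alphabet`.

```python
from collections import Counter


def transition_estimate(sample, context, symbol, alphabet):
    """Empirical probability that `symbol` follows `context` in `sample`."""
    sample, context = tuple(sample), tuple(context)
    k = len(context)
    # followers[b] = number of times `context` is immediately followed by b.
    followers = Counter(
        sample[t + k]
        for t in range(len(sample) - k)
        if sample[t:t + k] == context
    )
    total = sum(followers[b] for b in alphabet)
    if total == 0:
        # Context never observed with a successor: use the uniform fallback.
        return 1.0 / len(alphabet)
    return followers[symbol] / total
```

With the sample `"abracadabra"` and alphabet `"abcdr"`, the symbol `a` is followed by `b`, `c`, `d`, `b`, so the estimate of `b` after `a` is 0.5.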

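For $i \ge 1$, define

$$\Lambda_n(x_{-i}^{-1}) = 2 \sum_{y \in A} \sum_{a \in A} N_n(y x_{-i}^{-1} a) \log\left[\frac{\hat p_n(a \mid y x_{-i}^{-1})}{\hat p_n(a \mid x_{-i}^{-1})}\right]$$

where $y x_{-i}^{-1} = (y, x_{-i}, \ldots, x_{-1})$ and

$$\hat p_n(a \mid y x_{-i}^{-1}) = \frac{N_n(y x_{-i}^{-1} a)}{\sum_{b \in A} N_n(y x_{-i}^{-1} b)}$$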

Note that $\Lambda_n(x_{-i}^{-1})$ is the log-likelihood ratio statistic for testing the consistency of the sample with the probabilistic context tree $(\tau, p)$ against the alternative that it is consistent with $(\tau', p')$, where $\tau$ and $\tau'$ differ only by a set of sibling nodes.
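To make the test statistic concrete, here is a hedged Python sketch of the log-likelihood ratio that compares a context with each of its one-symbol extensions into the past. The helper names `follower_counts` and `log_likelihood_ratio` are hypothetical. Terms whose count is zero are skipped, on the usual convention that they contribute nothing to the sum, and the sample is assumed to contain only symbols from `alphabet`.

```python
import math
from collections import Counter


def follower_counts(sample, context):
    """Counter mapping each symbol b to the number of times `context` b occurs."""
    k = len(context)
    return Counter(
        sample[t + k]
        for t in range(len(sample) - k)
        if sample[t:t + k] == context
    )


def log_likelihood_ratio(sample, context, alphabet):
    """Twice the log-likelihood gain from extending `context` by one past symbol."""
    sample, context = tuple(sample), tuple(context)
    short = follower_counts(sample, context)
    short_total = sum(short[b] for b in alphabet)
    stat = 0.0
    for y in alphabet:
        # Extended context: y followed by the original context.
        extended = follower_counts(sample, (y,) + context)
        extended_total = sum(extended[b] for b in alphabet)
        for a in alphabet:
            if extended[a] > 0:  # zero counts contribute nothing
                p_long = extended[a] / extended_total
                p_short = short[a] / short_total
                stat += extended[a] * math.log(p_long / p_short)
    return 2.0 * stat
```

On the sample `"aabaabaab"` with alphabet `"ab"`, the symbol after `a` is fully determined once one more past symbol is known, and the statistic for the context `a` equals $10 \log 2$. On the strictly alternating sample `"abababab"`, the context `b` already determines the next symbol and the statistic is 0.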

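The length of the current estimated context is defined by

$$\hat\ell_n(X_0^{n-1}) = \max\left\{ i = 2, \ldots, K(n) : \Lambda_n(X_{n-i+1}^{n-1}) > C \log n \right\}$$

where $C$ is any positive constant. Finally, Rissanen [1] proved the following result. Given a sample $X_0, \ldots, X_{n-1}$ of a finite probabilistic context tree $(\tau, p)$, then

$$P\left(\hat\ell_n(X_0^{n-1}) \neq \ell(X_0^{n-1})\right) \longrightarrow 0$$

as $n \to \infty$, where $\ell(X_0^{n-1})$ denotes the length of the true context of the sample.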
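The pruning rule can be sketched end to end in Python under one reading of the definition: the estimated length is the largest $i$ between 2 and $K(n)$ for which the log-likelihood ratio of the last $i-1$ symbols of the sample exceeds $C \log n$. Several choices here are assumptions made for illustration only: the function names, rounding $K(n)$ down to an integer, allowing separate constants for the depth and the threshold, and returning 1 when no $i$ passes the test.

```python
import math
from collections import Counter


def follower_counts(sample, context):
    """Counter mapping each symbol b to the number of times `context` b occurs."""
    k = len(context)
    return Counter(
        sample[t + k]
        for t in range(len(sample) - k)
        if sample[t:t + k] == context
    )


def log_likelihood_ratio(sample, context, alphabet):
    """Twice the log-likelihood gain from extending `context` by one past symbol."""
    short = follower_counts(sample, context)
    short_total = sum(short[b] for b in alphabet)
    stat = 0.0
    for y in alphabet:
        extended = follower_counts(sample, (y,) + context)
        extended_total = sum(extended[b] for b in alphabet)
        for a in alphabet:
            if extended[a] > 0:  # zero counts contribute nothing
                ratio = (extended[a] / extended_total) / (short[a] / short_total)
                stat += extended[a] * math.log(ratio)
    return 2.0 * stat


def estimate_context_length(sample, alphabet, depth_const=1.0, threshold_const=1.0):
    """Estimate the length of the context ending at the last symbol of `sample`."""
    sample = tuple(sample)
    n = len(sample)  # assumed to be at least 2 so that log(n) > 0
    max_depth = min(n - 1, int(depth_const * math.log(n)))  # K(n), rounded down
    threshold = threshold_const * math.log(n)
    # Scan from the longest candidate down; the first i that passes is the maximum.
    for i in range(max_depth, 1, -1):
        suffix = sample[n - i + 1:]  # the last i - 1 symbols
        if log_likelihood_ratio(sample, suffix, alphabet) > threshold:
            return i
    return 1  # assumed convention when no extension is significant
```

For the periodic sample `"aab" * 50 + "a"`, the final `a` alone does not determine the next symbol but the last two symbols do, and the sketch returns 2. For `"aab" * 50`, the final `b` is always followed by `a`, no extension is significant, and the sketch returns 1.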