Processor design is a subfield of computer science and computer engineering (fabrication) that deals with creating a processor, a key component of computer hardware.

An integrated circuit is a set of electronic circuits on one small flat piece of semiconductor material, usually silicon. In an IC, a large number of miniaturized transistors and other electronic components are integrated together on the chip. This results in circuits that are orders of magnitude smaller, faster, and less expensive than those constructed of discrete components, allowing a large transistor count.

Semiconductor device fabrication is the process used to manufacture semiconductor devices, typically integrated circuits (ICs) such as computer processors, microcontrollers, and memory chips that are present in everyday electrical and electronic devices. It is a multiple-step photolithographic and physico-chemical process during which electronic circuits are gradually created on a wafer, typically made of pure single-crystal semiconducting material. Silicon is almost always used, but various compound semiconductors are used for specialized applications.

The metal–oxide–semiconductor field-effect transistor is a type of field-effect transistor (FET), most commonly fabricated by the controlled oxidation of silicon. It has an insulated gate, the voltage of which determines the conductivity of the device. This ability to change conductivity with the amount of applied voltage can be used for amplifying or switching electronic signals. The term metal–insulator–semiconductor field-effect transistor (MISFET) is almost synonymous with MOSFET. Another near-synonym is insulated-gate field-effect transistor (IGFET).
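How the gate voltage controls conduction can be illustrated with the textbook first-order ("square-law") model of an n-channel enhancement MOSFET. The sketch below is not taken from the text above: the function name `nmos_drain_current`, the threshold voltage `v_th`, and the transconductance parameter `k` are hypothetical illustration values, and real devices deviate from this idealized model.

```python
def nmos_drain_current(v_gs, v_ds, v_th=0.7, k=2e-3):
    """Drain current (A) of an idealized n-channel enhancement MOSFET.

    v_gs: gate-to-source voltage (V)
    v_ds: drain-to-source voltage (V), assumed >= 0
    v_th: threshold voltage (V), hypothetical value
    k:    transconductance parameter (A/V^2), hypothetical value
    """
    v_ov = v_gs - v_th  # overdrive voltage
    if v_ov <= 0:
        # Cutoff: the gate voltage is too low to form a channel.
        return 0.0
    if v_ds < v_ov:
        # Triode region: the channel behaves like a gate-controlled resistor.
        return k * (v_ov * v_ds - 0.5 * v_ds ** 2)
    # Saturation: current is set by the gate voltage, useful for amplification.
    return 0.5 * k * v_ov ** 2


if __name__ == "__main__":
    for v_gs in (0.5, 1.2, 1.7):
        print(v_gs, nmos_drain_current(v_gs, v_ds=2.0))
```

Below the threshold the modeled device is off (a switch), while above it the current rises with gate voltage (an amplifier), which is the behavior the paragraph describes.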

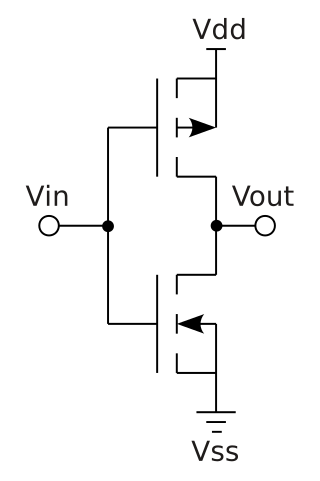

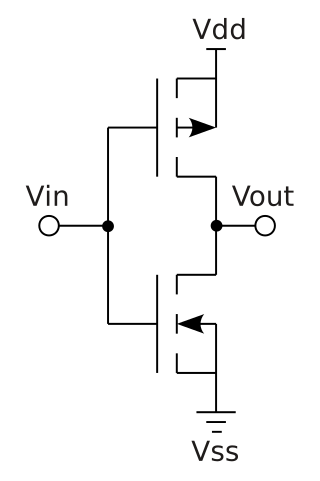

Complementary metal–oxide–semiconductor is a type of metal–oxide–semiconductor field-effect transistor (MOSFET) fabrication process that uses complementary and symmetrical pairs of p-type and n-type MOSFETs for logic functions. CMOS technology is used for constructing integrated circuit (IC) chips, including microprocessors, microcontrollers, memory chips, and other digital logic circuits. CMOS technology is also used for analog circuits such as image sensors, data converters, RF circuits, and highly integrated transceivers for many types of communication.
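The idea of complementary pairs can be sketched at switch level. The example below models a two-input CMOS NAND gate under simplifying assumptions: transistors are treated as ideal switches, logic levels are the integers 0 and 1, and the helper names (`nmos_on`, `pmos_on`, `cmos_nand`) are hypothetical.

```python
def nmos_on(gate):
    """An n-type MOSFET conducts when its gate is at logic 1."""
    return gate == 1


def pmos_on(gate):
    """A p-type MOSFET conducts when its gate is at logic 0."""
    return gate == 0


def cmos_nand(a, b):
    """Switch-level model of a two-input CMOS NAND gate."""
    # Pull-up network: two pMOS transistors in parallel between VDD and the output.
    pull_up = pmos_on(a) or pmos_on(b)
    # Pull-down network: two nMOS transistors in series between the output and ground.
    pull_down = nmos_on(a) and nmos_on(b)
    # The networks are complementary: exactly one of them conducts for any input.
    assert pull_up != pull_down
    return 1 if pull_up else 0


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, cmos_nand(a, b))
```

Because only one network conducts at a time in this model, there is no steady path from the supply to ground once the output has settled.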

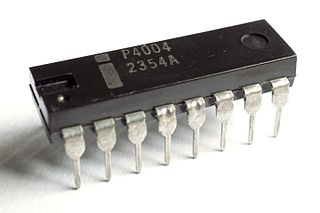

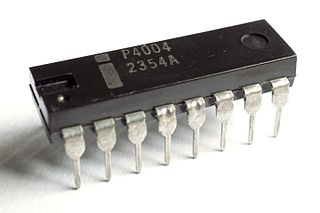

The Intel 4004 is a 4-bit central processing unit (CPU) released by Intel Corporation in 1971. Sold for US$60, it was the first commercially produced microprocessor, and the first in a long line of Intel CPUs.

An application-specific integrated circuit is an integrated circuit (IC) chip customized for a particular use, rather than intended for general-purpose use, such as a chip designed to run in a digital voice recorder or a high-efficiency video codec. Application-specific standard product chips are intermediate between ASICs and industry-standard integrated circuits like the 7400 series or the 4000 series. ASIC chips are typically fabricated using metal–oxide–semiconductor (MOS) technology, as MOS integrated circuit chips.

Bipolar CMOS (BiCMOS) is a semiconductor technology that integrates two semiconductor technologies, those of the bipolar junction transistor and the CMOS logic gate, into a single integrated circuit. In more recent times the bipolar processes have been extended to include high mobility devices using silicon–germanium junctions.

In computer engineering, a logic family is one of two related concepts:

Miniaturization is the trend to manufacture ever-smaller mechanical, optical, and electronic products and devices. Examples include the miniaturization of mobile phones and computers, and vehicle engine downsizing. In electronics, the exponential scaling and miniaturization of silicon MOSFETs leads to the number of transistors on an integrated circuit chip doubling about every two years, an observation known as Moore's law. This leads to MOS integrated circuits such as microprocessors and memory chips being built with increasing transistor density, faster performance, and lower power consumption, enabling the miniaturization of electronic devices.
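The doubling described by Moore's law corresponds to the exponential N(t) = N0 · 2^((t − t0) / 2), with t in years. The sketch below evaluates that formula; the function name `projected_transistors` is hypothetical, and the starting point of 2,300 transistors in 1971 is used only as an illustrative baseline. The result is an idealized projection, not a record of any actual chip.

```python
def projected_transistors(year, base_year=1971, base_count=2300, doubling_years=2.0):
    """Idealized transistor count assuming one doubling every `doubling_years`."""
    doublings = (year - base_year) / doubling_years
    return base_count * 2 ** doublings


if __name__ == "__main__":
    for year in (1971, 1973, 1981, 1991):
        print(year, round(projected_transistors(year)))
```

Ten doublings (twenty years) multiply the count by 1,024, which is why even modest per-generation gains compound into very large densities.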

A mixed-signal integrated circuit is any integrated circuit that has both analog circuits and digital circuits on a single semiconductor die. Their usage has grown dramatically with the increased use of cell phones, telecommunications, portable electronics, and automobiles with electronics and digital sensors.

In integrated circuits, depletion-load NMOS is a form of digital logic family that uses only a single power supply voltage, unlike earlier NMOS logic families that needed more than one different power supply voltage. Although manufacturing these integrated circuits required additional processing steps, improved switching speed and the elimination of the extra power supply made this logic family the preferred choice for many microprocessors and other logic elements.

Integrated circuit design, or IC design, is a sub-field of electronics engineering, encompassing the particular logic and circuit design techniques required to design integrated circuits, or ICs. ICs consist of miniaturized electronic components built into an electrical network on a monolithic semiconductor substrate by photolithography.

In semiconductor electronics fabrication technology, a self-aligned gate is a transistor manufacturing approach whereby the gate electrode of a MOSFET is used as a mask for the doping of the source and drain regions. This technique ensures that the gate is naturally and precisely aligned to the edges of the source and drain.

Four-phase logic is a type of, and design methodology for, dynamic logic. It enabled non-specialist engineers to design quite complex ICs, using either PMOS or NMOS processes. It uses a kind of 4-phase clock signal.

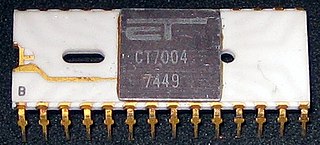

PMOS or pMOS logic is a family of digital circuits based on p-channel, enhancement mode metal–oxide–semiconductor field-effect transistors (MOSFETs). In the late 1960s and early 1970s, PMOS logic was the dominant semiconductor technology for large-scale integrated circuits before being superseded by NMOS and CMOS devices.

A three-dimensional integrated circuit is a MOS integrated circuit (IC) manufactured by stacking as many as 16 or more ICs and interconnecting them vertically using, for instance, through-silicon vias (TSVs) or Cu-Cu connections. The stacked ICs behave as a single device, achieving performance improvements at reduced power and with a smaller footprint than conventional two-dimensional processes. The 3D IC is one of several 3D integration schemes that exploit the z-direction to achieve electrical performance benefits in microelectronics and nanoelectronics.

In semiconductor manufacturing, a process corner is an example of a design-of-experiments (DoE) technique that refers to a variation of fabrication parameters used in applying an integrated circuit design to a semiconductor wafer. Process corners represent the extremes of these parameter variations within which a circuit that has been etched onto the wafer must function correctly. A circuit running on devices fabricated at these process corners may run slower or faster than specified and at lower or higher temperatures and voltages, but if the circuit does not function at all at any of these process extremes, the design is considered to have inadequate design margin.
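A corner check can be sketched as an enumeration of every combination of parameter extremes, followed by a test that the circuit still meets its limits at each one. Everything numeric below is an assumption made for illustration: the nominal delay, the multiplicative delay factors, and the names `corner_delays` and `failing_corners` are hypothetical, and real sign-off flows rely on characterized device models rather than simple scale factors.

```python
from itertools import product

NOMINAL_DELAY_NS = 1.0  # hypothetical nominal path delay

# Hypothetical multiplicative effect of each extreme on the path delay.
PROCESS = {"slow": 1.25, "typical": 1.0, "fast": 0.8}
VOLTAGE = {"low": 1.1, "nominal": 1.0, "high": 0.9}
TEMPERATURE = {"cold": 0.95, "nominal": 1.0, "hot": 1.05}


def corner_delays():
    """Return the modeled delay (ns) for every process/voltage/temperature corner."""
    delays = {}
    for (p, pf), (v, vf), (t, tf) in product(
        PROCESS.items(), VOLTAGE.items(), TEMPERATURE.items()
    ):
        delays[(p, v, t)] = NOMINAL_DELAY_NS * pf * vf * tf
    return delays


def failing_corners(min_delay_ns, max_delay_ns):
    """List the corners whose modeled delay falls outside the allowed window."""
    return sorted(
        corner
        for corner, delay in corner_delays().items()
        if not (min_delay_ns <= delay <= max_delay_ns)
    )


if __name__ == "__main__":
    # An empty list means the design has margin at every modeled corner.
    print(failing_corners(min_delay_ns=0.6, max_delay_ns=1.5))
    # Tightening the upper limit exposes the slowest corner.
    print(failing_corners(min_delay_ns=0.6, max_delay_ns=1.4))
```

A non-empty result corresponds to the case described above, where the design has inadequate margin at one or more extremes.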
