Game theory is the study of mathematical models of strategic interactions. It has applications in many fields of social science, and is used extensively in economics, logic, systems science and computer science. Initially, game theory addressed two-person zero-sum games, in which a participant's gains or losses are exactly balanced by the losses and gains of the other participant. In the 1950s, it was extended to the study of non-zero-sum games, and was eventually applied to a wide range of behavioral relations. It is now an umbrella term for the science of rational decision making in humans, animals, and computers.

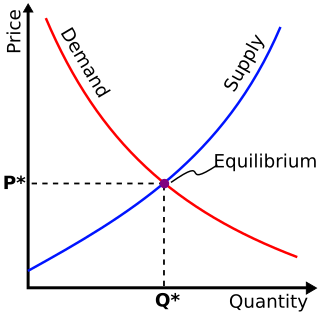

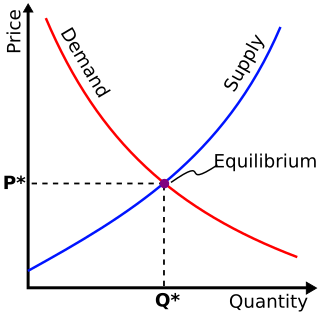

In game theory, the Nash equilibrium is the most commonly used solution concept for non-cooperative games. A Nash equilibrium is a situation where no player could gain by unilaterally changing their own strategy, that is, while all other players keep their strategies fixed. The idea of Nash equilibrium dates back to the time of Cournot, who in 1838 applied it to his model of competition in an oligopoly.
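For a finite two-player game in normal form, this definition can be checked cell by cell. The following is a minimal Python sketch (the function name `pure_nash_equilibria` and the prisoner's dilemma payoffs are illustrative assumptions) that finds only pure-strategy equilibria; it does not search for mixed-strategy equilibria.

```python
from itertools import product

def pure_nash_equilibria(row_payoffs, col_payoffs):
    """Return all pure-strategy Nash equilibria (i, j) of a two-player game.

    row_payoffs[i][j] and col_payoffs[i][j] are the payoffs to the row and
    column player when row plays strategy i and column plays strategy j.
    """
    n_rows = len(row_payoffs)
    n_cols = len(row_payoffs[0])
    equilibria = []
    for i, j in product(range(n_rows), range(n_cols)):
        # Row cannot gain by switching rows while column keeps playing j.
        row_ok = all(row_payoffs[i][j] >= row_payoffs[k][j] for k in range(n_rows))
        # Column cannot gain by switching columns while row keeps playing i.
        col_ok = all(col_payoffs[i][j] >= col_payoffs[i][k] for k in range(n_cols))
        if row_ok and col_ok:
            equilibria.append((i, j))
    return equilibria

# Prisoner's dilemma (illustrative payoffs): strategy 0 = cooperate, 1 = defect.
pd_row = [[-1, -3], [0, -2]]
pd_col = [[-1, 0], [-3, -2]]
print(pure_nash_equilibria(pd_row, pd_col))  # [(1, 1)]: both defect
```

A pure coordination game such as `[[1, 0], [0, 1]]` for both players has two pure equilibria, `(0, 0)` and `(1, 1)`, which shows that equilibria need not be unique.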

In economics, perfect information is a feature of perfect competition. With perfect information in a market, all consumers and producers have complete and instantaneous knowledge of all market prices, their own utility functions, and their own cost functions.

In game theory, the best response is the strategy which produces the most favorable outcome for a player, taking other players' strategies as given. The concept of a best response is central to John Nash's best-known contribution, the Nash equilibrium, the point at which each player in a game has selected the best response to the other players' strategies.
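Once the other players' strategies are taken as given, computing a best response is a simple maximization. A minimal sketch, assuming a two-player game stored as a payoff matrix for the row player and an opponent strategy given as a probability vector (the helper name `best_responses` is hypothetical):

```python
import math

def best_responses(payoffs, opponent_mix):
    """Return the row player's best responses to a (possibly mixed) opponent strategy.

    payoffs[i][j]: row player's payoff when playing row i against column j.
    opponent_mix[j]: probability that the opponent plays column j.
    Several rows can tie for the highest expected payoff, so a list is returned.
    """
    expected = [sum(p * u for p, u in zip(opponent_mix, row)) for row in payoffs]
    best = max(expected)
    # A small absolute tolerance treats floating-point near-ties as ties.
    return [i for i, value in enumerate(expected)
            if math.isclose(value, best, abs_tol=1e-12)]

# Rock-paper-scissors payoffs for the row player (0 = rock, 1 = paper, 2 = scissors).
rps = [[0, -1, 1], [1, 0, -1], [-1, 1, 0]]
print(best_responses(rps, [1, 0, 0]))        # opponent plays rock: [1] (paper)
print(best_responses(rps, [1/3, 1/3, 1/3]))  # uniform opponent: [0, 1, 2]
```

Returning a list rather than a single index reflects that best responses need not be unique: against the uniform mix in rock-paper-scissors, every action is a best response.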

In game theory, a signaling game is a simple type of dynamic Bayesian game.

In game theory, a move, action, or play is any one of the options which a player can choose in a setting where the optimal outcome depends not only on their own actions but on the actions of others. The discipline mainly concerns the action of a player in a game affecting the behavior or actions of other players. Some examples of "games" include chess, bridge, poker, Monopoly, Diplomacy, or Battleship.

In game theory, a solution concept is a formal rule for predicting how a game will be played. These predictions are called "solutions", and describe which strategies will be adopted by players and, therefore, the result of the game. The most commonly used solution concepts are equilibrium concepts, most famously Nash equilibrium.

In game theory, an extensive-form game is a specification of a game allowing for the explicit representation of a number of key aspects, like the sequencing of players' possible moves, their choices at every decision point, the information each player has about the other players' moves when they make a decision, and their payoffs for all possible game outcomes. Extensive-form games also allow for the representation of incomplete information in the form of chance events modeled as "moves by nature". Extensive-form representations differ from normal-form representations in that they provide a more complete description of the game in question, whereas the normal form simply reduces the game to a payoff matrix.
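To illustrate how the normal form compresses a game tree, here is a sketch for a hypothetical two-stage game in which player 1 moves first and player 2 observes that move before replying (the moves `L`, `R`, `l`, `r` and all payoffs are made up for illustration). A pure strategy for player 2 is a complete plan giving a reply at each of their decision nodes, so this small tree becomes a 2 × 4 matrix.

```python
from itertools import product

# Hypothetical two-stage game with perfect information: player 1 moves first,
# player 2 sees that move and replies. Leaves map (move1, move2) -> (u1, u2).
leaves = {
    ("L", "l"): (3, 1), ("L", "r"): (1, 2),
    ("R", "l"): (2, 1), ("R", "r"): (0, 0),
}
p1_moves = ["L", "R"]
p2_moves = ["l", "r"]

# A pure strategy for player 2 says what to do at *each* of their decision
# nodes: a plan (reply to L, reply to R), giving 2 x 2 = 4 strategies.
p2_strategies = list(product(p2_moves, repeat=len(p1_moves)))

def to_normal_form(leaves, p1_moves, p2_strategies):
    """Collapse the game tree into a payoff table indexed by pure-strategy pairs."""
    matrix = {}
    for a in p1_moves:
        for plan in p2_strategies:
            reply = plan[p1_moves.index(a)]  # only the part of the plan that is reached
            matrix[(a, plan)] = leaves[(a, reply)]
    return matrix

normal_form = to_normal_form(leaves, p1_moves, p2_strategies)
for (a, plan), payoff in normal_form.items():
    print(a, plan, payoff)
```

The resulting table no longer records that player 2 moves second or what they observed; that timing and information structure is what the extensive form keeps explicit.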

In game theory, a Perfect Bayesian Equilibrium (PBE) is a solution with Bayesian probability to a turn-based game with incomplete information. More specifically, it is an equilibrium concept that uses Bayesian updating to describe player behavior in dynamic games with incomplete information. Perfect Bayesian equilibria are used to find the outcomes of games where players take turns but are unsure of the "type" of their opponent, which occurs when players don't know their opponent's preference between individual moves. A classic example of a dynamic game with types is a war game where the player is unsure whether their opponent is a risk-taking "hawk" type or a pacifistic "dove" type. Perfect Bayesian equilibria are a refinement of Bayesian Nash equilibrium (BNE), which is a solution concept with Bayesian probability for non-turn-based games.
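The Bayesian updating step in a PBE amounts to Bayes' rule applied to the opponent's type, given the strategy each type is assumed to play. A minimal sketch under that assumption, using the hawk/dove example with made-up probabilities (the function name `posterior` and the actions "attack" and "wait" are hypothetical):

```python
from fractions import Fraction

def posterior(prior, action_prob, observed_action):
    """Update beliefs about the opponent's type with Bayes' rule.

    prior[t]: probability that the opponent is of type t.
    action_prob[t][a]: probability that a type-t opponent plays action a
        (its assumed equilibrium strategy).
    Returns the updated belief after observing observed_action, or None if
    no type plays that action.
    """
    joint = {t: prior[t] * action_prob[t].get(observed_action, 0) for t in prior}
    total = sum(joint.values())
    if total == 0:
        # Off the equilibrium path: Bayes' rule does not pin down beliefs here.
        return None
    return {t: p / total for t, p in joint.items()}

prior = {"hawk": Fraction(1, 2), "dove": Fraction(1, 2)}
strategy = {
    "hawk": {"attack": Fraction(3, 4), "wait": Fraction(1, 4)},
    "dove": {"attack": Fraction(1, 4), "wait": Fraction(3, 4)},
}
print(posterior(prior, strategy, "attack"))  # hawk: 3/4, dove: 1/4
```

Returning `None` for an action that no type plays reflects that Bayes' rule places no restriction on beliefs off the equilibrium path; PBE only requires beliefs to be updated by Bayes' rule where possible.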

In game theory, a Bayesian game is a strategic decision-making model which assumes players have incomplete information. Players may hold private information relevant to the game, meaning that the payoffs are not common knowledge. Bayesian games model the outcome of player interactions using aspects of Bayesian probability. They are notable because they allowed, for the first time in game theory, solutions to games with incomplete information to be specified.
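In a Bayesian game, a player who does not know an opponent's type can evaluate each action by its expected payoff under the prior over types, given each type's strategy. A minimal sketch of that calculation (the function names, the actions "fight", "yield", "escalate", "retreat", and all payoffs are hypothetical; exact fractions avoid rounding):

```python
from fractions import Fraction

def expected_payoffs(prior, type_strategy, payoff):
    """Expected payoff of each of our actions when the opponent's type is private.

    prior[t]: prior probability of opponent type t.
    type_strategy[t]: action that a type-t opponent takes.
    payoff[(mine, theirs)]: our payoff for that pair of actions.
    """
    my_actions = sorted({mine for mine, _ in payoff})
    return {a: sum(prior[t] * payoff[(a, type_strategy[t])] for t in prior)
            for a in my_actions}

def bayesian_best_response(prior, type_strategy, payoff):
    """Action with the highest expected payoff against the type distribution."""
    values = expected_payoffs(prior, type_strategy, payoff)
    return max(values, key=values.get)

prior = {"hawk": Fraction(3, 10), "dove": Fraction(7, 10)}
type_strategy = {"hawk": "escalate", "dove": "retreat"}
payoff = {
    ("fight", "escalate"): -5, ("fight", "retreat"): 4,
    ("yield", "escalate"): 0, ("yield", "retreat"): 1,
}
print(expected_payoffs(prior, type_strategy, payoff))
print(bayesian_best_response(prior, type_strategy, payoff))  # fight
```

With a 3/10 prior on the hawk type, fighting is better (13/10 versus 7/10); raising that prior to 1/2 reverses the choice, illustrating how the optimal action depends on beliefs about private information.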

In game theory, normal form is a description of a game. Unlike extensive form, normal-form representations are not graphical per se, but rather represent the game by way of a matrix. While this approach can be of greater use in identifying strictly dominated strategies and Nash equilibria, some information is lost as compared to extensive-form representations. The normal-form representation of a game includes all perceptible and conceivable strategies, and their corresponding payoffs, for each player.

In game theory, a strategy A dominates another strategy B if A always produces a better result than B, no matter how the player's opponent or opponents play. Some very simple games can be solved using dominance.
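Solving a game by dominance usually means deleting strictly dominated strategies repeatedly until none remain. A minimal sketch of that procedure for two players, assuming domination only by other pure strategies (the function name `iterated_elimination` and both example games are illustrative):

```python
def iterated_elimination(row_payoffs, col_payoffs):
    """Iteratively delete strictly dominated pure strategies in a two-player game.

    row_payoffs[i][j] / col_payoffs[i][j]: payoffs when row plays i and column plays j.
    Returns the indices of the surviving rows and columns. Only domination by
    another pure strategy is checked; domination by mixed strategies is ignored.
    """
    rows = list(range(len(row_payoffs)))
    cols = list(range(len(row_payoffs[0])))

    def row_dominated(r):
        # Some other surviving row does strictly better against every surviving column.
        return any(all(row_payoffs[s][c] > row_payoffs[r][c] for c in cols)
                   for s in rows if s != r)

    def col_dominated(c):
        # Some other surviving column does strictly better against every surviving row.
        return any(all(col_payoffs[r][d] > col_payoffs[r][c] for r in rows)
                   for d in cols if d != c)

    changed = True
    while changed:
        changed = False
        for r in rows:
            if row_dominated(r):
                rows.remove(r)  # safe: we stop iterating right after removal
                changed = True
                break
        for c in cols:
            if col_dominated(c):
                cols.remove(c)
                changed = True
                break
    return rows, cols

# Prisoner's dilemma: 0 = cooperate, 1 = defect.
print(iterated_elimination([[-1, -3], [0, -2]], [[-1, 0], [-3, -2]]))  # ([1], [1])

# Rows U = 0, D = 1; columns L = 0, C = 1, R = 2.
print(iterated_elimination([[1, 1, 0], [0, 0, 2]], [[0, 2, 1], [3, 1, 0]]))  # ([0], [1])
```

In the prisoner's dilemma one round removes cooperation for both players. In the second game, column R is removed first (C beats it against both rows), which makes row D dominated, which in turn makes column L dominated, leaving (U, C).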

In game theory, folk theorems are a class of theorems describing an abundance of Nash equilibrium payoff profiles in repeated games. The original Folk Theorem concerned the payoffs of all the Nash equilibria of an infinitely repeated game. This result was called the Folk Theorem because it was widely known among game theorists in the 1950s, even though no one had published it. Friedman's (1971) Theorem concerns the payoffs of certain subgame-perfect Nash equilibria (SPE) of an infinitely repeated game, and so strengthens the original Folk Theorem by using a stronger equilibrium concept: subgame-perfect Nash equilibria rather than Nash equilibria.
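Friedman-style results rest on Nash reversion: players cooperate as long as everyone has cooperated, and revert to the stage-game Nash equilibrium forever after any deviation (a "grim trigger" strategy). A minimal sketch for the infinitely repeated prisoner's dilemma with discount factor `delta`, assuming the standard payoff labels T > R > P (temptation, reward, punishment); the function names and the numbers in the example are illustrative. Cooperation is sustainable when R/(1 - δ) ≥ T + δP/(1 - δ), which rearranges to δ ≥ (T - R)/(T - P).

```python
from fractions import Fraction

def grim_trigger_sustainable(reward, temptation, punishment, delta):
    """Check whether grim trigger supports mutual cooperation (0 < delta < 1).

    Stage game: prisoner's dilemma with temptation > reward > punishment, where
    punishment is the payoff from mutual defection (the stage-game Nash
    equilibrium). After any deviation both players defect forever, which is
    itself an equilibrium of every subgame, so only the deviation from
    cooperation needs checking.
    """
    cooperate_forever = reward / (1 - delta)
    # Best deviation: take the temptation payoff now, then be punished forever.
    deviate_once = temptation + delta * punishment / (1 - delta)
    return cooperate_forever >= deviate_once

def critical_discount_factor(reward, temptation, punishment):
    """Smallest delta satisfying the inequality above: (T - R) / (T - P)."""
    return Fraction(temptation - reward, temptation - punishment)

print(critical_discount_factor(reward=3, temptation=5, punishment=1))  # 1/2
```

With T = 5, R = 3, P = 1, cooperation is sustainable exactly when δ ≥ 1/2; passing `delta` as a `Fraction` keeps the boundary case exact.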

In game theory, a repeated game is an extensive-form game that consists of a number of repetitions of some base game, called the stage game. The stage game is usually one of the well-studied 2-person games. Repeated games capture the idea that a player will have to take into account the impact of their current action on the future actions of other players; this impact is sometimes called their reputation. Non-repeated games are also called single-stage or single-shot games.
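The effect of current actions on future play can be seen by simulating a finite number of rounds of a repeated prisoner's dilemma. A minimal sketch with illustrative payoffs, where each strategy is a hypothetical function of its own and its opponent's past moves:

```python
# Stage game: prisoner's dilemma, C = cooperate, D = defect (illustrative payoffs).
STAGE = {("C", "C"): (3, 3), ("C", "D"): (0, 5),
         ("D", "C"): (5, 0), ("D", "D"): (1, 1)}

def tit_for_tat(my_history, their_history):
    # Cooperate in the first round, then copy the opponent's previous move.
    return "C" if not their_history else their_history[-1]

def always_defect(my_history, their_history):
    return "D"

def play_repeated(strategy1, strategy2, rounds, delta=1.0):
    """Play the stage game `rounds` times; return each player's discounted total."""
    h1, h2 = [], []
    total1 = total2 = 0.0
    for t in range(rounds):
        a1 = strategy1(h1, h2)
        a2 = strategy2(h2, h1)
        u1, u2 = STAGE[(a1, a2)]
        total1 += delta ** t * u1
        total2 += delta ** t * u2
        h1.append(a1)
        h2.append(a2)
    return total1, total2

print(play_repeated(tit_for_tat, always_defect, rounds=5))  # (4.0, 9.0)
print(play_repeated(tit_for_tat, tit_for_tat, rounds=5))    # (15.0, 15.0)
```

Over five undiscounted rounds, always-defect gains 5 in the first round against tit-for-tat but then earns only 1 per round, ending with 9, compared with the 15 that each of two tit-for-tat players earns.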

In game theory, the war of attrition is a dynamic timing game in which players choose a time to stop, and fundamentally trade off the strategic gains from outlasting other players and the real costs expended with the passage of time. Its precise opposite is the pre-emption game, in which players elect a time to stop, and fundamentally trade off the strategic costs from outlasting other players and the real gains occasioned by the passage of time. The model was originally formulated by John Maynard Smith; a mixed evolutionarily stable strategy (ESS) was determined by Bishop & Cannings. An example is a second-price all-pay auction, in which the prize goes to the player with the highest bid and each player, including the winner, pays the loser's low bid.
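The payoff rule of the two-player second-price all-pay auction can be written down directly. A minimal sketch, assuming a common prize value for both players and an arbitrary tie rule that splits the prize (the function name is hypothetical):

```python
def war_of_attrition_payoffs(bid1, bid2, prize):
    """Payoffs in a two-player second-price all-pay auction (war of attrition).

    The higher bidder (the one who outlasts the other) wins `prize`; both
    players pay the lower bid, i.e. the time at which the first player quit.
    Ties split the prize (an assumed tie-breaking rule).
    """
    cost = min(bid1, bid2)
    if bid1 > bid2:
        return prize - cost, -cost
    if bid2 > bid1:
        return -cost, prize - cost
    return prize / 2 - cost, prize / 2 - cost

print(war_of_attrition_payoffs(5, 3, prize=10))    # (7, -3)
print(war_of_attrition_payoffs(12, 11, prize=10))  # (-1, -11)
```

Because the lower bid is paid whatever happens, escalation can leave even the winner worse off: with a prize of 10 and bids of 12 and 11, the winner nets -1 and the loser -11.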

In game theory, a correlated equilibrium is a solution concept that is more general than the well-known Nash equilibrium. It was first discussed by mathematician Robert Aumann in 1974. The idea is that each player chooses their action according to their private observation of the value of the same public signal. A strategy assigns an action to every possible observation a player can make. If no player would want to deviate from their strategy, the distribution from which the signals are drawn is called a correlated equilibrium.
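A candidate distribution is a correlated equilibrium when no player gains, in expectation, by deviating from any action the signal recommends to them. A minimal sketch of that check for two players, using a game of Chicken with illustrative payoffs and a distribution putting probability 1/3 on each of (dare, chicken), (chicken, dare) and (chicken, chicken); the function name and action labels are hypothetical:

```python
from fractions import Fraction

def is_correlated_equilibrium(payoffs, distribution, actions):
    """Check the obedience constraints of a two-player correlated equilibrium.

    payoffs[(a1, a2)] = (u1, u2).
    distribution[(a1, a2)] = probability that a1 is recommended to player 1
        and a2 to player 2.
    """
    for player in (0, 1):
        for recommended in actions:
            for deviation in actions:
                # Expected gain from playing `deviation` whenever `recommended`
                # is signalled (weighted by joint, not conditional, probability).
                gain = 0
                for profile, prob in distribution.items():
                    if profile[player] != recommended:
                        continue
                    deviated = list(profile)
                    deviated[player] = deviation
                    gain += prob * (payoffs[tuple(deviated)][player]
                                    - payoffs[profile][player])
                if gain > 0:
                    return False
    return True

chicken = {("D", "D"): (0, 0), ("D", "C"): (7, 2),
           ("C", "D"): (2, 7), ("C", "C"): (6, 6)}
third = Fraction(1, 3)
signal = {("D", "C"): third, ("C", "D"): third, ("C", "C"): third}
print(is_correlated_equilibrium(chicken, signal, ["D", "C"]))  # True
```

Summing over joint rather than conditional probabilities is enough, since conditioning on a recommendation only rescales each inequality by a positive constant. Putting all mass on (chicken, chicken) fails the check, because either player would then prefer to dare.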

In game theory, the purification theorem was contributed by Nobel laureate John Harsanyi in 1973. The theorem justifies a puzzling aspect of mixed strategy Nash equilibria: each player is wholly indifferent between each of the actions he puts non-zero weight on, yet he mixes them so as to make every other player also indifferent.
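The indifference conditions can be solved explicitly for a 2 × 2 game, and doing so exhibits the puzzle the theorem addresses: each player's mixing probability is determined by the other player's payoffs, not their own. A minimal sketch for integer payoffs (the function name is hypothetical; degenerate games in which a denominator vanishes are simply returned as `None`):

```python
from fractions import Fraction

def mixed_equilibrium_2x2(A, B):
    """Fully mixed equilibrium of a 2x2 game via the indifference conditions.

    A[i][j], B[i][j]: integer payoffs of the row and column player.
    Returns (p, q) with p = probability that row plays row 0 and
    q = probability that column plays column 0, or None if there is no
    fully mixed equilibrium (or the game is degenerate).
    """
    # q is chosen to make the ROW player indifferent, so it depends only on A.
    denom_q = A[0][0] - A[0][1] - A[1][0] + A[1][1]
    # p is chosen to make the COLUMN player indifferent, so it depends only on B.
    denom_p = B[0][0] - B[1][0] - B[0][1] + B[1][1]
    if denom_q == 0 or denom_p == 0:
        return None
    q = Fraction(A[1][1] - A[0][1], denom_q)
    p = Fraction(B[1][1] - B[1][0], denom_p)
    if not (0 < p < 1 and 0 < q < 1):
        return None
    return p, q

# Matching pennies and a Battle of the Sexes (illustrative payoffs).
print(mixed_equilibrium_2x2([[1, -1], [-1, 1]], [[-1, 1], [1, -1]]))  # p = q = 1/2
print(mixed_equilibrium_2x2([[3, 0], [0, 2]], [[2, 0], [0, 3]]))      # p = 3/5, q = 2/5
```

In the Battle of the Sexes example each player chooses their own preferred outcome with probability 3/5, yet changing the row player's payoffs `A` alone would move only the column player's probability `q`.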

In game theory, a subgame perfect equilibrium is a refinement of a Nash equilibrium used in dynamic games. A strategy profile is a subgame perfect equilibrium if it represents a Nash equilibrium of every subgame of the original game. Informally, this means that at any point in the game, the players' behavior from that point onward should represent a Nash equilibrium of the continuation game, no matter what happened before. Every finite extensive game with perfect recall has a subgame perfect equilibrium. Perfect recall is a term introduced by Harold W. Kuhn in 1953 and "equivalent to the assertion that each player is allowed by the rules of the game to remember everything he knew at previous moves and all of his choices at those moves".
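For finite games with perfect information, a subgame perfect equilibrium can be found by backward induction: solve the last decision nodes first and work toward the root. A minimal sketch on a hypothetical entry-deterrence tree (node names, moves, and payoffs are illustrative; ties are broken in favor of the first move listed):

```python
def backward_induction(node, plan=None):
    """Solve a finite perfect-information game tree by backward induction.

    A leaf is a tuple of payoffs (one per player); a decision node is a dict
    {"name": str, "player": int, "moves": {move: child}}.
    Returns (payoffs, plan), where plan maps every decision node, including
    those off the equilibrium path, to the move chosen there.
    """
    if plan is None:
        plan = {}
    if isinstance(node, tuple):  # leaf: payoff vector
        return node, plan
    player = node["player"]
    best_move, best_payoffs = None, None
    for move, child in node["moves"].items():
        payoffs, _ = backward_induction(child, plan)  # solve the subgame first
        if best_payoffs is None or payoffs[player] > best_payoffs[player]:
            best_move, best_payoffs = move, payoffs
    plan[node["name"]] = best_move
    return best_payoffs, plan

entry_game = {
    "name": "entrant", "player": 0,
    "moves": {
        "out": (0, 2),
        "in": {"name": "incumbent", "player": 1,
               "moves": {"fight": (-1, -1), "accommodate": (1, 1)}},
    },
}

payoffs, plan = backward_induction(entry_game)
print(payoffs, plan)  # (1, 1) {'incumbent': 'accommodate', 'entrant': 'in'}
```

The profile (out, fight) is also a Nash equilibrium of this game, since the incumbent's threat is never tested, but it is not subgame perfect: in the subgame after entry, fighting is not a best response.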

Jean-François Mertens was a Belgian game theorist and mathematical economist.