Analysis of variance (ANOVA) is a collection of statistical models and their associated estimation procedures used to analyze the differences among means. ANOVA was developed by the statistician Ronald Fisher. It is based on the law of total variance, where the observed variance in a particular variable is partitioned into components attributable to different sources of variation. In its simplest form, ANOVA provides a statistical test of whether two or more population means are equal, and therefore generalizes the t-test beyond two means.
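
For the simplest case of k groups, the partition of variation can be written as a sum-of-squares identity. The notation here is an assumption introduced for illustration: y_{ij} is observation j in group i, n_i is the size of group i, \bar{y}_i is the mean of group i, and \bar{y} is the grand mean.

$$
\underbrace{\sum_{i=1}^{k}\sum_{j=1}^{n_i}\left(y_{ij}-\bar{y}\right)^2}_{\text{total}}
=
\underbrace{\sum_{i=1}^{k} n_i\left(\bar{y}_i-\bar{y}\right)^2}_{\text{between groups}}
+
\underbrace{\sum_{i=1}^{k}\sum_{j=1}^{n_i}\left(y_{ij}-\bar{y}_i\right)^2}_{\text{within groups}}
$$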

An F-test is any statistical test used to compare the variances of two samples or the ratio of variances between multiple samples. The test statistic, a random variable F, follows an F-distribution when the null hypothesis is true and the customary assumptions about the error term (ε) hold. It is most often used when comparing statistical models that have been fitted to a data set, in order to identify the model that best fits the population from which the data were sampled. Exact "F-tests" mainly arise when the models have been fitted to the data using least squares. The name was coined by George W. Snedecor, in honour of Ronald Fisher. Fisher initially developed the statistic as the variance ratio in the 1920s.

Analysis of covariance (ANCOVA) is a general linear model that blends ANOVA and regression. ANCOVA evaluates whether the means of a dependent variable (DV) are equal across levels of one or more categorical independent variables (IV) and across one or more continuous variables. For example, the categorical variable(s) might describe treatment and the continuous variable(s) might be covariates or nuisance variables; or vice versa. Mathematically, ANCOVA decomposes the variance in the DV into variance explained by the covariate(s) (CV), variance explained by the categorical IV, and residual variance. Intuitively, ANCOVA can be thought of as 'adjusting' the DV by the group means of the CV(s).

In statistics, multivariate analysis of variance (MANOVA) is a procedure for comparing multivariate sample means. As a multivariate procedure, it is used when there are two or more dependent variables, and is often followed by significance tests involving individual dependent variables separately.

Mathematical statistics is the application of probability theory, a branch of mathematics, to statistics, as opposed to techniques for collecting statistical data. Specific mathematical techniques which are used for this include mathematical analysis, linear algebra, stochastic analysis, differential equations, and measure theory.

In medicine, a crossover study or crossover trial is a longitudinal study in which subjects receive a sequence of different treatments. While crossover studies can be observational studies, many important crossover studies are controlled experiments, which are discussed in this article. Crossover designs are common for experiments in many scientific disciplines, for example psychology, pharmaceutical science, and medicine.

Multilevel models are statistical models of parameters that vary at more than one level. An example could be a model of student performance that contains measures for individual students as well as measures for classrooms within which the students are grouped. These models can be seen as generalizations of linear models, although they can also extend to non-linear models. These models became much more popular after sufficient computing power and software became available.

A mixed model, mixed-effects model or mixed error-component model is a statistical model containing both fixed effects and random effects. These models are useful in a wide variety of disciplines in the physical, biological and social sciences. They are particularly useful in settings where repeated measurements are made on the same statistical units, or where measurements are made on clusters of related statistical units. Mixed models are often preferred over traditional analysis of variance and regression models because they do not rely on the assumption of independent observations. They are also flexible in dealing with missing values and uneven spacing of repeated measurements. Mixed model analysis allows measurements to be explicitly modeled with a wider variety of correlation and variance-covariance structures, which avoids biased estimates.

Pseudoreplication has many definitions. Pseudoreplication was originally defined in 1984 by Stuart H. Hurlbert as the use of inferential statistics to test for treatment effects with data from experiments where either treatments are not replicated or replicates are not statistically independent. Subsequently, Millar and Anderson identified it as a special case of inadequate specification of random factors where both random and fixed factors are present. It is sometimes narrowly interpreted as an inflation of the number of samples or replicates which are not statistically independent. This definition omits the confounding of unit and treatment effects in a misspecified F-ratio. In practice, incorrect F-ratios for statistical tests of fixed effects often arise from a default F-ratio that is formed over the error rather than the mixed term.

Mauchly's sphericity test or Mauchly's W is a statistical test used to validate a repeated measures analysis of variance (ANOVA). It was developed in 1940 by John Mauchly.

In statistics, one-way analysis of variance is a technique to compare whether two or more samples' means are significantly different. This analysis of variance technique requires a numeric response variable "Y" and a single explanatory variable "X", hence "one-way".
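
A minimal sketch of the one-way computation, using only the Python standard library. The function name `one_way_f` and the example data are hypothetical; the code returns the F statistic (between-group mean square divided by within-group mean square) together with its degrees of freedom, and leaves the p-value lookup to a statistics package.

```python
from statistics import mean


def one_way_f(groups):
    """Return (F, df_between, df_within) for a list of numeric samples.

    `groups` holds one list of responses Y per level of the factor X.
    """
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total

    # Variation of the group means around the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variation of the observations around their own group mean.
    ss_within = sum((y - mean(g)) ** 2 for g in groups for y in g)

    df_between = k - 1
    df_within = n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical data: three levels of X, three observations each.
groups = [[1.0, 2.0, 3.0], [2.0, 3.0, 4.0], [5.0, 6.0, 7.0]]
f_stat, df_b, df_w = one_way_f(groups)
print(f_stat, df_b, df_w)
```

A large F relative to the F-distribution with (df_between, df_within) degrees of freedom suggests that at least one group mean differs from the others.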

Multivariate analysis of covariance (MANCOVA) is an extension of analysis of covariance (ANCOVA) methods to cover cases where there is more than one dependent variable and where the control of concomitant continuous independent variables – covariates – is required. The most prominent benefit of the MANCOVA design over the simple MANOVA is the 'factoring out' of noise or error that has been introduced by the covariate. A commonly used multivariate version of the ANOVA F-statistic is Wilks' Lambda (Λ), which represents the ratio between the error variance and the effect variance.

In statistics, restricted randomization occurs in the design of experiments and in particular in the context of randomized experiments and randomized controlled trials. Restricted randomization allows intuitively poor allocations of treatments to experimental units to be avoided, while retaining the theoretical benefits of randomization. For example, in a clinical trial of a new proposed treatment of obesity compared to a control, an experimenter would want to avoid outcomes of the randomization in which the new treatment was allocated only to the heaviest patients.
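
One simple way to restrict a randomization is to draw random allocations repeatedly and reject any that fail a balance criterion. The sketch below follows that idea for the obesity example; it is only one possible scheme, and the function name, the patient weights, and the 10 kg threshold are all hypothetical.

```python
import random
from statistics import mean


def restricted_randomization(weights, max_mean_gap, seed=0, max_tries=10_000):
    """Randomly split units into two equal arms, rejecting unbalanced splits.

    An allocation is accepted only if the mean weights of the two arms
    differ by at most `max_mean_gap`.
    """
    rng = random.Random(seed)
    units = list(range(len(weights)))
    half = len(units) // 2
    for _ in range(max_tries):
        rng.shuffle(units)
        treated, control = units[:half], units[half:]
        gap = abs(mean(weights[i] for i in treated)
                  - mean(weights[i] for i in control))
        if gap <= max_mean_gap:
            return sorted(treated), sorted(control)
    raise RuntimeError("no acceptable allocation found")


# Hypothetical patient weights in kg.
weights = [70, 82, 95, 101, 110, 124, 133, 140]
treated, control = restricted_randomization(weights, max_mean_gap=10)
print(treated, control)
```

Every accepted allocation is still chosen at random, but allocations that place the new treatment only on the heaviest patients can never be returned.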

In statistics, a mixed-design analysis of variance model, also known as a split-plot ANOVA, is used to test for differences between two or more independent groups whilst subjecting participants to repeated measures. In a mixed-design ANOVA model, one factor is a between-subjects variable and the other is a within-subjects variable. Overall, the model is therefore a type of mixed-effects model.

In randomized statistical experiments, generalized randomized block designs (GRBDs) are used to study the interaction between blocks and treatments. For a GRBD, each treatment is replicated at least two times in each block; this replication allows the estimation and testing of an interaction term in the linear model.

In statistics, one purpose for the analysis of variance (ANOVA) is to analyze differences in means between groups. The test statistic, F, assumes independence of observations, homogeneous variances, and population normality. ANOVA on ranks is a statistic designed for situations when the normality assumption has been violated.
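
A minimal sketch of the rank-transformation step, assuming the common approach of replacing each observation by its rank in the pooled sample (tied values share the average rank) before the usual ANOVA computations are applied to the ranks. The function name `rank_transform` and the data are hypothetical.

```python
def rank_transform(groups):
    """Replace every observation by its 1-based rank in the pooled sample.

    Tied values receive the average of the ranks they occupy.
    The group structure of the input is preserved in the output.
    """
    pooled = [y for g in groups for y in g]
    order = sorted(range(len(pooled)), key=lambda i: pooled[i])
    ranks = [0.0] * len(pooled)

    i = 0
    while i < len(order):
        # Find the end of the run of values tied with position i.
        j = i
        while j + 1 < len(order) and pooled[order[j + 1]] == pooled[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1  # average of ranks i+1 .. j+1
        for pos in range(i, j + 1):
            ranks[order[pos]] = avg_rank
        i = j + 1

    # Cut the flat rank list back into the original groups.
    ranked, start = [], 0
    for g in groups:
        ranked.append(ranks[start:start + len(g)])
        start += len(g)
    return ranked


# Hypothetical data with one tie across groups.
ranked = rank_transform([[3.1, 9.4], [3.1, 0.5]])
print(ranked)
```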

In statistics, the two-way analysis of variance (ANOVA) is an extension of the one-way ANOVA that examines the influence of two different categorical independent variables on one continuous dependent variable. The two-way ANOVA not only aims at assessing the main effect of each independent variable but also if there is any interaction between them.
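
The main effects and the interaction are commonly expressed in a linear model of the following form. The symbols are assumptions introduced for illustration: Y_{ijk} is observation k at level i of the first factor and level j of the second, \mu is the overall mean, \alpha_i and \beta_j are the main effects, (\alpha\beta)_{ij} is the interaction effect, and \varepsilon_{ijk} is the error term.

$$
Y_{ijk} = \mu + \alpha_i + \beta_j + (\alpha\beta)_{ij} + \varepsilon_{ijk}
$$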

One application of multilevel modeling (MLM) is the analysis of repeated measures data. Multilevel modeling for repeated measures data is most often discussed in the context of modeling change over time; however, it may also be used for repeated measures data in which time is not a factor.

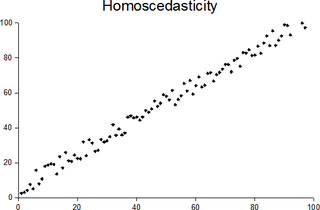

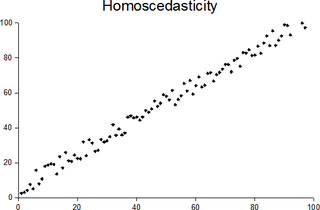

In statistics, a sequence of random variables is homoscedastic if all its random variables have the same finite variance; this is also known as homogeneity of variance. The complementary notion is called heteroscedasticity, also known as heterogeneity of variance. The spellings homoskedasticity and heteroskedasticity are also frequently used. Skedasticity comes from the Ancient Greek word skedánnymi, meaning “to scatter”. Assuming a variable is homoscedastic when in reality it is heteroscedastic results in unbiased but inefficient point estimates and in biased estimates of standard errors, and may result in overestimating the goodness of fit as measured by the Pearson coefficient.
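
As a rough illustration, the sample variances of several groups can be compared directly. The sketch below computes the ratio of the largest to the smallest sample variance; the function name `variance_ratio` and the data are hypothetical, and a large ratio is only an informal hint of heteroscedasticity, not a formal test.

```python
from statistics import variance


def variance_ratio(groups):
    """Return the largest sample variance divided by the smallest.

    A value near 1 is consistent with homogeneity of variance;
    a much larger value hints at heterogeneity.
    """
    variances = [variance(g) for g in groups]
    return max(variances) / min(variances)


# Hypothetical data: the second group is ten times more spread out.
ratio = variance_ratio([[1.0, 2.0, 3.0], [10.0, 20.0, 30.0]])
print(ratio)
```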