The variance function arises in many areas of statistical analysis. Two of its most important uses are in generalized linear models and in non-parametric regression.

Generalized linear model

When a member of the exponential family has been specified, the variance function can easily be derived. [4]: 29 The general form of the variance function is presented in the exponential family context, as well as specific forms for Normal, Bernoulli, Poisson, and Gamma. In addition, we describe the applications and use of variance functions in maximum likelihood estimation and quasi-likelihood estimation.

Derivation

The generalized linear model (GLM) is a generalization of ordinary regression analysis that extends to any member of the exponential family. It is particularly useful when the response variable is categorical, binary or subject to a constraint (e.g. only positive responses make sense). The components of a GLM are summarized here; for more detail, see the page on generalized linear models.

A GLM consists of three main ingredients:

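- 1. Random component: a distribution of $y$ from the exponential family, with $E[y \mid X] = \mu$,

- 2. Linear predictor: $\eta = X\beta = \sum_{j=1}^{p} X_j \beta_j$,

- 3. Link function: $\eta = g(\mu)$, equivalently $\mu = g^{-1}(\eta)$.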

First it is important to derive a couple of key properties of the exponential family.

Any random variable $y$ in the exponential family has a probability density function of the form

$$f(y, \theta, \phi) = \exp\left(\frac{y\theta - b(\theta)}{\phi} - c(y, \phi)\right),$$

with log-likelihood

$$\ell(\theta, y, \phi) = \log f(y, \theta, \phi) = \frac{y\theta - b(\theta)}{\phi} - c(y, \phi).$$

Here, $\theta$ is the canonical parameter and the parameter of interest, and $\phi$ is a nuisance parameter which plays a role in the variance. We use Bartlett's identities to derive a general expression for the variance function. The first and second Bartlett results ensure that, under suitable conditions (see Leibniz integral rule), for a density function $f_\theta(\cdot)$ dependent on $\theta$,

$$E_\theta\left[\frac{\partial}{\partial\theta}\log f_\theta(y)\right] = 0,$$

$$\operatorname{Var}_\theta\left[\frac{\partial}{\partial\theta}\log f_\theta(y)\right] + E_\theta\left[\frac{\partial^2}{\partial\theta^2}\log f_\theta(y)\right] = 0.$$

These identities lead to simple calculations of the expected value $E_\theta[y]$ and variance $\operatorname{Var}_\theta[y]$ of any random variable $y$ in the exponential family.

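Expected value of Y: Taking the first derivative with respect to $\theta$ of the log of the density in the exponential family form described above, we have

$$\frac{\partial}{\partial\theta}\log f(y, \theta, \phi) = \frac{\partial}{\partial\theta}\left[\frac{y\theta - b(\theta)}{\phi} - c(y, \phi)\right] = \frac{y - b'(\theta)}{\phi}.$$

Then taking the expected value and setting it equal to zero leads to

$$E_\theta\left[\frac{y - b'(\theta)}{\phi}\right] = \frac{E_\theta[y] - b'(\theta)}{\phi} = 0, \qquad\text{so}\qquad E_\theta[y] = b'(\theta).$$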

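Variance of Y: To compute the variance we use the second Bartlett identity,

$$\operatorname{Var}_\theta\left[\frac{\partial}{\partial\theta}\left(\frac{y\theta - b(\theta)}{\phi} - c(y, \phi)\right)\right] + E_\theta\left[\frac{\partial^2}{\partial\theta^2}\left(\frac{y\theta - b(\theta)}{\phi} - c(y, \phi)\right)\right] = 0,$$

$$\operatorname{Var}_\theta\left[\frac{y - b'(\theta)}{\phi}\right] + E_\theta\left[\frac{-b''(\theta)}{\phi}\right] = 0,$$

$$\operatorname{Var}_\theta[y] = b''(\theta)\,\phi.$$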

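We now have a relationship between $\mu$ and $\theta$, namely

$$\mu = b'(\theta) \quad\text{and}\quad \theta = (b')^{-1}(\mu),$$

which allows for a relationship between $\mu$ and the variance,

$$V(\theta) = b''(\theta) \quad \text{(the part of the variance that depends on } \theta\text{)},$$

$$V(\mu) = b''\left((b')^{-1}(\mu)\right).$$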

Note that because $\operatorname{Var}_\theta[y] > 0$ implies $b''(\theta) > 0$, the map $b' : \theta \mapsto \mu$ is invertible. We derive the variance function for a few common distributions.
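The relationships $\mu = b'(\theta)$ and $V = b''(\theta)$ can be checked numerically. The following is a minimal sketch, not part of the original derivation: it assumes the standard cumulant functions $b(\theta)$ for the four families named earlier (in the canonical parametrization) and approximates the derivatives by central finite differences. The function and dictionary names are illustrative only.

```python
import math

def derivative(f, x, h=1e-4):
    """Central finite-difference approximation to f'(x)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)

def second_derivative(f, x, h=1e-4):
    """Central finite-difference approximation to f''(x)."""
    return (f(x + h) - 2.0 * f(x) + f(x - h)) / (h * h)

def mean_and_variance_function(b, theta):
    """Return (mu, V) = (b'(theta), b''(theta)) for a cumulant function b."""
    return derivative(b, theta), second_derivative(b, theta)

# Assumed cumulant functions b(theta) in the canonical parametrization.
CUMULANTS = {
    "normal": lambda t: 0.5 * t * t,                 # expect V(mu) = 1
    "poisson": lambda t: math.exp(t),                # expect V(mu) = mu
    "bernoulli": lambda t: math.log1p(math.exp(t)),  # expect V(mu) = mu (1 - mu)
    "gamma": lambda t: -math.log(-t),                # theta < 0, expect V(mu) = mu^2
}

# Textbook variance functions, written as functions of the mean.
EXPECTED_V = {
    "normal": lambda mu: 1.0,
    "poisson": lambda mu: mu,
    "bernoulli": lambda mu: mu * (1.0 - mu),
    "gamma": lambda mu: mu * mu,
}

def check(name, theta):
    """Return (mu, numerical V, textbook V evaluated at mu) for one family."""
    mu, v = mean_and_variance_function(CUMULANTS[name], theta)
    return mu, v, EXPECTED_V[name](mu)
```

Calling `check("poisson", 0.7)`, for instance, returns a numerical $b''(\theta)$ that should agree with $\mu$ up to finite-difference error.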

Example – normal

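The normal distribution is a special case where the variance function is a constant. Let $y \sim N(\mu, \sigma^2)$; then we put the density function of $y$ in the form of the exponential family described above:

$$f(y) = \exp\left(\frac{y\mu - \frac{\mu^2}{2}}{\sigma^2} - \frac{y^2}{2\sigma^2} - \frac{1}{2}\ln\left(2\pi\sigma^2\right)\right),$$

where, matching terms with the exponent $\frac{y\theta - b(\theta)}{\phi} - c(y, \phi)$,

$$\theta = \mu, \qquad b(\theta) = \frac{\theta^2}{2}, \qquad \phi = \sigma^2, \qquad c(y, \phi) = \frac{y^2}{2\phi} + \frac{1}{2}\ln(2\pi\phi).$$

To calculate the variance function $V(\mu)$, we first express $\theta$ as a function of $\mu$. Then we transform $V(\theta)$ into a function of $\mu$:

$$\theta = \mu, \qquad b'(\theta) = \theta = E[y] = \mu, \qquad V(\theta) = b''(\theta) = 1.$$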

Therefore, the variance function is constant.
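As a sanity check on the normal example, the sketch below evaluates the density once in its usual form and once in exponential family form with $\theta = \mu$, $b(\theta) = \theta^2/2$ and $\phi = \sigma^2$. It assumes the convention that $c(y, \phi)$ enters the exponent with a minus sign; the function names are illustrative.

```python
import math

def normal_pdf(y, mu, sigma2):
    """Usual form of the N(mu, sigma2) density."""
    return math.exp(-(y - mu) ** 2 / (2.0 * sigma2)) / math.sqrt(2.0 * math.pi * sigma2)

def normal_pdf_exponential_family(y, mu, sigma2):
    """Same density written as exp((y*theta - b(theta)) / phi - c(y, phi))."""
    theta = mu                      # canonical parameter
    phi = sigma2                    # dispersion (nuisance) parameter
    b = 0.5 * theta ** 2            # cumulant function, so b''(theta) = 1
    # Assumed sign convention: c is subtracted in the exponent.
    c = y ** 2 / (2.0 * phi) + 0.5 * math.log(2.0 * math.pi * phi)
    return math.exp((y * theta - b) / phi - c)
```

The two functions should agree to floating-point precision for any `y`, `mu` and positive `sigma2`.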

Application – weighted least squares

A very important application of the variance function is its use in parameter estimation and inference when the response variable is of the required exponential family form, as well as in some cases when it is not (discussed under quasi-likelihood). Weighted least squares (WLS) is a special case of generalized least squares. Each term in the WLS criterion includes a weight that determines the influence each observation has on the final parameter estimates. As in regular least squares, the goal is to estimate the unknown parameters in the regression function by finding the values that minimize the sum of the squared deviations between the observed responses and the functional portion of the model.

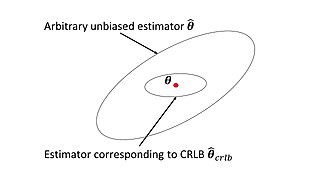

While WLS assumes independence of observations, it does not assume equal variance and is therefore a solution for parameter estimation in the presence of heteroscedasticity. The Gauss–Markov theorem and Aitken demonstrate that the best linear unbiased estimator (BLUE), the unbiased estimator with minimum variance, has each weight equal to the reciprocal of the variance of the measurement.
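To make the role of the weights concrete, here is a minimal sketch of WLS for a straight-line model $y \approx a + bx$, with each weight set to the reciprocal of that observation's variance. The closed-form solution for two parameters is used so that no linear algebra library is needed; the function name and argument layout are illustrative.

```python
def weighted_least_squares(x, y, variances):
    """Fit y ~ a + b*x by minimizing sum_i w_i (y_i - a - b x_i)^2, w_i = 1 / variance_i."""
    w = [1.0 / v for v in variances]
    sw = sum(w)
    # Weighted means of x and y.
    xbar = sum(wi * xi for wi, xi in zip(w, x)) / sw
    ybar = sum(wi * yi for wi, yi in zip(w, y)) / sw
    # Weighted sums of squares and cross-products about the weighted means.
    sxx = sum(wi * (xi - xbar) ** 2 for wi, xi in zip(w, x))
    sxy = sum(wi * (xi - xbar) * (yi - ybar) for wi, xi, yi in zip(w, x, y))
    slope = sxy / sxx
    intercept = ybar - slope * xbar
    return intercept, slope
```

An observation with a very large variance receives a weight close to zero, so it has almost no influence on the fitted line.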

In the GLM framework, our goal is to estimate parameters $\beta$, where $Z = g(E[y \mid X]) = X\beta$. Therefore, we would like to minimize $(Z - X\beta)^T W (Z - X\beta)$, and if we define the weight matrix $W$ as

$$W = \operatorname{diag}\left(\frac{1}{\phi V(\mu_1) g'(\mu_1)^2}, \ldots, \frac{1}{\phi V(\mu_n) g'(\mu_n)^2}\right) \quad (n \times n),$$

where $\phi$, $V(\mu)$ and $g(\mu)$ are defined in the previous section, it allows for iteratively reweighted least squares (IRLS) estimation of the parameters. See the section on iteratively reweighted least squares for more derivation and information.

It is also important to note that when the weight matrix is of the form described here, minimizing the expression $(Z - X\beta)^T W (Z - X\beta)$ also minimizes the Pearson distance. See Distance correlation for more.

The matrix $W$ falls right out of the estimating equations for estimation of $\beta$. Maximum likelihood estimation for each parameter $\beta_r$, $1 \le r \le p$, requires

$$\sum_{i=1}^{n} \frac{\partial \ell_i}{\partial \beta_r} = 0,$$

where $\ell(\theta, y, \phi) = \log f(y, \theta, \phi) = \frac{y\theta - b(\theta)}{\phi} - c(y, \phi)$ is the log-likelihood.

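Looking at a single observation we have

$$\frac{\partial \ell}{\partial \beta_r} = \frac{\partial \ell}{\partial \theta}\,\frac{\partial \theta}{\partial \mu}\,\frac{\partial \mu}{\partial \eta}\,\frac{\partial \eta}{\partial \beta_r},$$

with

$$\frac{\partial \eta}{\partial \beta_r} = x_r, \qquad \frac{\partial \ell}{\partial \theta} = \frac{y - b'(\theta)}{\phi} = \frac{y - \mu}{\phi}, \qquad \frac{\partial \theta}{\partial \mu} = \frac{\partial (b')^{-1}(\mu)}{\partial \mu} = \frac{1}{b''\left((b')^{-1}(\mu)\right)} = \frac{1}{V(\mu)}.$$

This gives us

$$\frac{\partial \ell}{\partial \beta_r} = \frac{y - \mu}{\phi V(\mu)}\,\frac{\partial \mu}{\partial \eta}\,x_r,$$

and noting that $\frac{\partial \eta}{\partial \mu} = g'(\mu)$, we have that

$$\frac{\partial \ell}{\partial \beta_r} = (y - \mu)\,w\,\frac{\partial \eta}{\partial \mu}\,x_r, \qquad w = \frac{1}{\phi V(\mu) g'(\mu)^2},$$

where $w$ is the diagonal entry of $W$ for this observation.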

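The Hessian matrix is determined in a similar manner and can be shown to have entries

$$H_{rs} = \frac{\partial^2 \ell}{\partial \beta_s\,\partial \beta_r} = \sum_{i=1}^{n} (y_i - \mu_i)\,x_{ir}\,\frac{\partial}{\partial \beta_s}\left[w_i\,g'(\mu_i)\right] - \sum_{i=1}^{n} w_i\,x_{ir}\,x_{is},$$

where $w_i$ is the $i$-th diagonal entry of $W$, so the second sum is the $(r, s)$ entry of $X^T W X$. The first sum has expectation zero because $E[y_i] = \mu_i$. Noticing that the Fisher information (FI),

$$\text{FI} = -E[H] = X^T W X,$$

allows for the asymptotic approximation of $\hat{\beta}$,

$$\hat{\beta} \sim N_p\left(\beta, (X^T W X)^{-1}\right),$$

and hence inference can be performed.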
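The estimation procedure described in this section can be sketched for one concrete case. The example below assumes a Poisson-type response with log link, $V(\mu) = \mu$ and $\phi = 1$, so that each weight $1/(\phi V(\mu) g'(\mu)^2)$ reduces to $\mu$, and a two-parameter linear predictor $\eta = \beta_0 + \beta_1 x$. It uses the usual IRLS working response $z = \eta + (y - \mu) g'(\mu)$ and a fixed number of iterations instead of a convergence test; these choices, like the function names, are assumptions made for illustration.

```python
import math

def fisher_information(x, b0, b1):
    """Entries (sum w, sum w*x, sum w*x^2) of X^T W X for X = [1, x] and w_i = mu_i."""
    mu = [math.exp(b0 + b1 * xi) for xi in x]
    s0 = sum(mu)
    s1 = sum(m * xi for m, xi in zip(mu, x))
    s2 = sum(m * xi * xi for m, xi in zip(mu, x))
    return s0, s1, s2

def irls_poisson_log(x, y, iterations=25):
    """IRLS for eta = b0 + b1*x with log link and V(mu) = mu (phi = 1)."""
    b0, b1 = math.log(sum(y) / len(y)), 0.0   # start from the intercept-only fit
    for _ in range(iterations):
        eta = [b0 + b1 * xi for xi in x]
        mu = [math.exp(e) for e in eta]
        # Working response z_i = eta_i + (y_i - mu_i) * g'(mu_i), with g'(mu) = 1/mu.
        z = [e + (yi - m) / m for e, yi, m in zip(eta, y, mu)]
        # Weighted normal equations (X^T W X) beta = X^T W z, with w_i = mu_i.
        s0, s1, s2 = fisher_information(x, b0, b1)
        t0 = sum(m * zi for m, zi in zip(mu, z))
        t1 = sum(m * xi * zi for m, xi, zi in zip(mu, x, z))
        det = s0 * s2 - s1 * s1
        b0, b1 = (s2 * t0 - s1 * t1) / det, (s0 * t1 - s1 * t0) / det
    # Asymptotic standard errors from the diagonal of (X^T W X)^{-1}.
    s0, s1, s2 = fisher_information(x, b0, b1)
    det = s0 * s2 - s1 * s1
    standard_errors = (math.sqrt(s2 / det), math.sqrt(s0 / det))
    return (b0, b1), standard_errors
```

At convergence the fitted means satisfy the score equations $\sum_i (y_i - \mu_i) = 0$ and $\sum_i x_i (y_i - \mu_i) = 0$, which gives a simple way to check the result.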

Application – quasi-likelihood

Because most features of GLMs only depend on the first two moments of the distribution, rather than the entire distribution, the quasi-likelihood can be developed by just specifying a link function and a variance function. That is, we need to specify

- the link function, $E[y] = \mu = g^{-1}(\eta)$,

- the variance function, $V(\mu)$, where $\operatorname{Var}_\theta(y) = \sigma^2 V(\mu)$.

With a specified variance function and link function we can develop, as alternatives to the log-likelihood function, the score function, and the Fisher information, a quasi-likelihood, a quasi-score, and the quasi-information. This allows for full inference of $\beta$.

Quasi-likelihood (QL)

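Though called a quasi-likelihood, this is in fact a quasi-log-likelihood. The QL for one observation is

$$Q_i(\mu_i, y_i) = \int_{y_i}^{\mu_i} \frac{y_i - t}{\sigma^2 V(t)}\,dt,$$

and therefore the QL for all $n$ observations is

$$Q(\mu, y) = \sum_{i=1}^{n} Q_i(\mu_i, y_i) = \sum_{i=1}^{n} \int_{y_i}^{\mu_i} \frac{y_i - t}{\sigma^2 V(t)}\,dt.$$

From the QL we obtain the quasi-score.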
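The quasi-likelihood integral for one observation, $\int_{y}^{\mu} \frac{y - t}{\sigma^2 V(t)}\,dt$, can be evaluated numerically for any variance function. The sketch below uses composite Simpson's rule and, as a check, compares against the closed form obtained by integrating directly when $V(t) = t$. The function names and the choice of quadrature rule are assumptions made for illustration.

```python
import math

def quasi_likelihood(y, mu, variance_function, sigma2=1.0, steps=2000):
    """Integral from y to mu of (y - t) / (sigma2 * V(t)) dt, by composite Simpson's rule."""
    if steps % 2:
        steps += 1                      # Simpson's rule needs an even number of intervals
    h = (mu - y) / steps                # negative when mu < y, which flips the orientation

    def integrand(t):
        return (y - t) / (sigma2 * variance_function(t))

    total = integrand(y) + integrand(mu)
    for k in range(1, steps):
        total += (4 if k % 2 else 2) * integrand(y + k * h)
    return total * h / 3.0

def quasi_likelihood_v_equals_mu(y, mu, sigma2=1.0):
    """Closed form of the same integral for V(t) = t (requires y > 0 and mu > 0)."""
    return (y * math.log(mu / y) - (mu - y)) / sigma2
```

The quasi-likelihood for a whole sample is the sum of these per-observation terms. Each term is zero at $\mu = y$ and negative elsewhere, so it is largest when the fitted mean equals the observation.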

Quasi-score (QS)

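Recall that the score function, $U$, for data with log-likelihood $\ell(\mu \mid y)$ is

$$U = \frac{\partial \ell}{\partial \mu}.$$

We obtain the quasi-score in an identical manner,

$$U = \frac{y - \mu}{\sigma^2 V(\mu)},$$

noting that, for one observation, the score is

$$\frac{\partial Q}{\partial \mu} = \frac{y - \mu}{\sigma^2 V(\mu)}.$$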

The first two Bartlett equations are satisfied for the quasi-score, namely

$$E[U] = 0$$

and

$$\operatorname{Cov}(U) + E\left[\frac{\partial U}{\partial \mu}\right] = 0.$$

In addition, the quasi-score is linear in y.
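These properties of the quasi-score can be verified in a simple case. The sketch below assumes $V(\mu) = \mu$ and $\sigma^2 = 1$ and takes the response to be Poisson with mean $\mu$, so that $\operatorname{Var}(y) = \sigma^2 V(\mu)$ holds. Expectations are computed by summing over a truncated Poisson probability mass function; the truncation point and the function names are illustrative choices.

```python
import math

def poisson_pmf(k, mu):
    """P(Y = k) for Y ~ Poisson(mu), computed on the log scale for stability."""
    return math.exp(k * math.log(mu) - mu - math.lgamma(k + 1))

def quasi_score(y, mu, sigma2=1.0):
    """U = (y - mu) / (sigma2 * V(mu)) with V(mu) = mu; linear in y."""
    return (y - mu) / (sigma2 * mu)

def quasi_score_derivative(y, mu, sigma2=1.0):
    """dU/dmu for V(mu) = mu: d/dmu [(y - mu) / mu] = -y / mu^2."""
    return -y / (sigma2 * mu * mu)

def bartlett_checks(mu, terms=200):
    """Return (E[U], Var(U) + E[dU/dmu]); both should be numerically zero."""
    probs = [poisson_pmf(k, mu) for k in range(terms)]
    mean_u = sum(p * quasi_score(k, mu) for k, p in enumerate(probs))
    var_u = sum(p * (quasi_score(k, mu) - mean_u) ** 2 for k, p in enumerate(probs))
    mean_du = sum(p * quasi_score_derivative(k, mu) for k, p in enumerate(probs))
    return mean_u, var_u + mean_du
```

Both returned quantities should vanish up to rounding and truncation error, mirroring the two Bartlett equations.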

Ultimately the goal is to find information about the parameters of interest $\beta$. Both the QS and the QL are actually functions of $\beta$. Recall that $\mu = g^{-1}(\eta)$ and $\eta = X\beta$; therefore,

$$\mu = g^{-1}(X\beta).$$

Quasi-information (QI)

The quasi-information is similar to the Fisher information,

$$i_b = -E\left[\frac{\partial U}{\partial \beta}\right].$$

QL, QS, QI as functions of $\beta$

The QL, QS and QI all provide the building blocks for inference about the parameters of interest, and therefore it is important to express the QL, QS and QI all as functions of $\beta$.

Recalling again that $\mu = g^{-1}(X\beta)$, we derive the expressions for QL, QS and QI parametrized under $\beta$.

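Quasi-likelihood in $\beta$:

$$Q(\beta, y) = \sum_{i=1}^{n} \int_{y_i}^{\mu_i(\beta)} \frac{y_i - t}{\sigma^2 V(t)}\,dt.$$

The QS as a function of $\beta$ is therefore

$$U_j(\beta) = \frac{\partial}{\partial \beta_j} Q(\beta, y) = \sum_{i=1}^{n} \frac{\partial \mu_i}{\partial \beta_j}\,\frac{y_i - \mu_i(\beta)}{\sigma^2 V(\mu_i)},$$

$$U(\beta) = \begin{bmatrix} U_1(\beta) \\ \vdots \\ U_p(\beta) \end{bmatrix} = \frac{D^T V^{-1} (y - \mu)}{\sigma^2},$$

where

$$D = \left[\frac{\partial \mu_i}{\partial \beta_j}\right] \quad (n \times p), \qquad V = \operatorname{diag}\left(V(\mu_1), \ldots, V(\mu_n)\right) \quad (n \times n).$$

The quasi-information matrix in $\beta$ is

$$i_b = -E\left[\frac{\partial U}{\partial \beta}\right] = \operatorname{Cov}(U(\beta)) = \frac{D^T V^{-1} D}{\sigma^2}.$$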

Obtaining the score function and the information of $\beta$ allows for parameter estimation and inference in a similar manner as described in Application – weighted least squares.
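As an illustration of the quantities parametrized under $\beta$, the sketch below computes the quasi-likelihood, the quasi-score $D^T V^{-1}(y - \mu)/\sigma^2$ and the quasi-information $D^T V^{-1} D/\sigma^2$ for one assumed setting: a log link, so that $\mu_i = \exp(x_i^T \beta)$ and $\partial\mu_i/\partial\beta_j = \mu_i x_{ij}$, together with $V(\mu) = \mu$. A finite-difference gradient of the quasi-likelihood is included only as a consistency check. All function names are hypothetical, and plain Python lists stand in for matrices.

```python
import math

def mu_of_beta(beta, x_row):
    """Inverse log link: mu_i = exp(x_i . beta)."""
    return math.exp(sum(b * xj for b, xj in zip(beta, x_row)))

def quasi_likelihood(beta, X, y, sigma2):
    """Q(beta, y) for V(t) = t, using the closed form of the integral (needs y_i > 0)."""
    total = 0.0
    for x_row, yi in zip(X, y):
        mu = mu_of_beta(beta, x_row)
        total += (yi * math.log(mu / yi) - (mu - yi)) / sigma2
    return total

def quasi_score(beta, X, y, sigma2):
    """U(beta) = D^T V^{-1} (y - mu) / sigma2, with D_ij = mu_i * x_ij."""
    u = [0.0] * len(beta)
    for x_row, yi in zip(X, y):
        mu = mu_of_beta(beta, x_row)
        for j in range(len(beta)):
            d_ij = mu * x_row[j]
            u[j] += d_ij * (yi - mu) / (sigma2 * mu)   # V(mu_i) = mu_i
    return u

def quasi_information(beta, X, sigma2):
    """i_b = D^T V^{-1} D / sigma2 for the same link and variance function."""
    p = len(beta)
    info = [[0.0] * p for _ in range(p)]
    for x_row in X:
        mu = mu_of_beta(beta, x_row)
        for j in range(p):
            for k in range(p):
                info[j][k] += (mu * x_row[j]) * (mu * x_row[k]) / (sigma2 * mu)
    return info

def numerical_gradient(f, beta, h=1e-6):
    """Central finite-difference gradient of a scalar function of beta."""
    grad = []
    for j in range(len(beta)):
        up, down = list(beta), list(beta)
        up[j] += h
        down[j] -= h
        grad.append((f(up) - f(down)) / (2.0 * h))
    return grad
```

For example, with a small design matrix `X`, positive responses `y` and a trial value of `beta`, `quasi_score(beta, X, y, sigma2)` should match `numerical_gradient(lambda b: quasi_likelihood(b, X, y, sigma2), beta)` up to finite-difference error. Solving `quasi_score = 0`, for instance by Fisher scoring with `quasi_information`, would then give the estimate of $\beta$.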