Video is an electronic medium used for the recording, copying, playback, transmission, and display of moving visual images, with or without accompanying audio. Video technology was initially developed for live transmission and later expanded to include recording and storage through analog formats such as magnetic tape. Since the late 20th century, digital video has become the dominant form, enabling efficient compression, storage, editing, and distribution across broadcast television, physical media, and internet-based platforms. Advances in digital imaging, compression standards, and network infrastructure have significantly influenced media production, communication, entertainment, education, and information dissemination worldwide.

Contents

- Etymology

- History

- Analog video

- Digital video

- Characteristics

- Frame rate

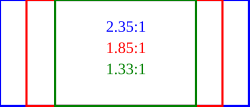

- Aspect ratio

- Color model and depth

- Quality

- Compression (digital only)

- Stereoscopy

- Formats

- Analog video

- Digital video

- Transport medium

- Display standards

- Digital television

- Analog television

- Computer displays

- Recording

- Digital encoding formats

- See also

- References

- External links

Video systems vary in display resolution, aspect ratio, refresh rate, color reproduction, and other qualities. Both analog and digital video can be carried on a variety of media, including radio, magnetic tape, optical discs, computer files, and network streaming.